If I subtract 2 StereoDepth frames from each other, how do I output the result in OpenCV?

In the model, I subtract a frame of 0's from the depth frame:

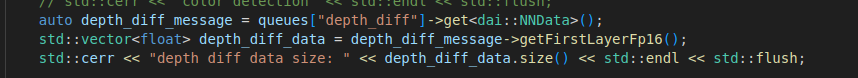

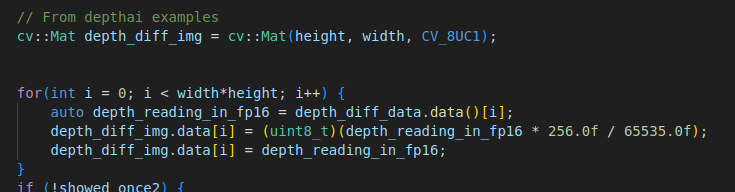

So it should just be outputting the same depth frame values. I get the NNData from the queue and check that the size is the same as the expected 1632x960, and it is.

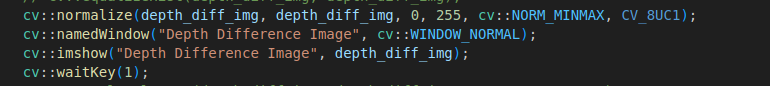

I create a cv::Mat, then iterate through the floats, normalize them to 0-255 and save them as uint8_t.

I then normalize and display:
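For reference, the normalize-and-convert step can be sketched in Python with NumPy alone. This is not the C++ code from the screenshots; `to_uint8` is a hypothetical helper, and it assumes plain min-max scaling is what is wanted.

```python
import numpy as np

def to_uint8(frame):
    """Min-max scale a float frame to 0-255 and convert to uint8 (hypothetical helper)."""
    frame = np.asarray(frame, dtype=np.float32)
    lo, hi = float(frame.min()), float(frame.max())
    if hi == lo:
        # A constant frame has no range to stretch; return all zeros.
        return np.zeros(frame.shape, dtype=np.uint8)
    scaled = (frame - lo) / (hi - lo) * 255.0
    return np.round(scaled).astype(np.uint8)

# Made-up values standing in for depth readings.
demo = to_uint8([[0.0, 500.0], [1000.0, 2000.0]])
```

The smallest input maps to 0 and the largest to 255, so a frame with one extreme outlier will look almost uniformly dark.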

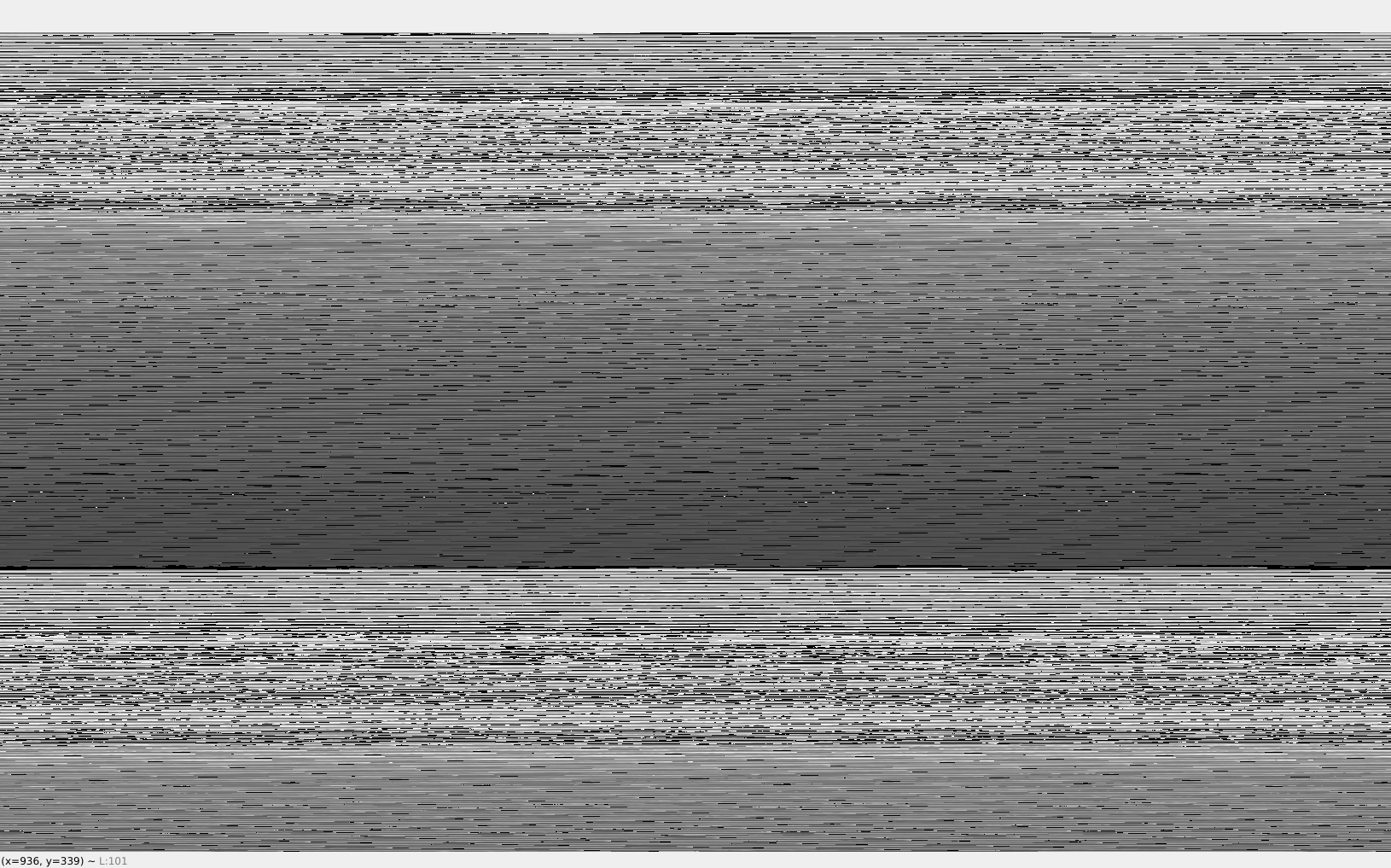

And what shows up:

It is maddening


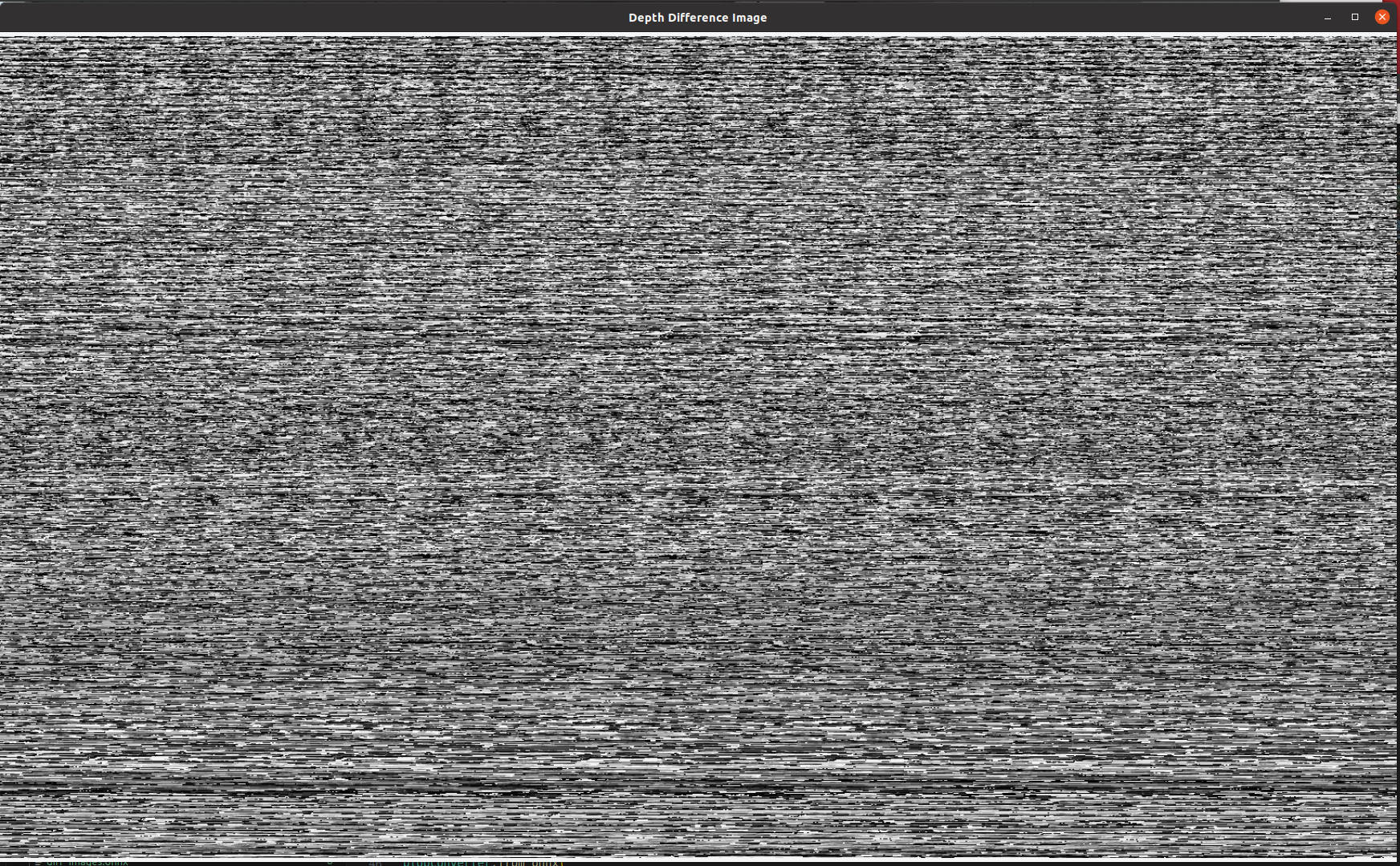

I rewrote it in Python, and now there is a bit of an actual outline of me in my seat in the middle of what is showing up.

But then I realized that I was reshaping it the wrong way, so I changed the reshape to match the example and got a scrambled image:

Now I am trying to figure out why the output looked closest to accurate when I had the reshaping wrong.

Why the heck can I barely make out an image of my hand when I scramble the reshape?
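One thing worth checking here: a flat buffer is stored row by row, so a reshape only reproduces the image when the row length matches the real frame width. The sketch below uses a tiny synthetic frame, not the camera output, and the sizes are made up for illustration.

```python
import numpy as np

height, width = 4, 6  # made-up sizes for illustration

# Synthetic frame with a vertical stripe in column 2.
frame = np.zeros((height, width), dtype=np.float32)
frame[:, 2] = 1.0

flat = frame.ravel()  # row-major flat buffer, like the list read from the queue

correct = flat.reshape(height, width)  # (rows, cols): stripe stays vertical
swapped = flat.reshape(width, height)  # same element count, wrong row length

stripe_intact = bool((correct[:, 2] == 1.0).all())
stripe_intact_swapped = bool((swapped[:, 2] == 1.0).all())
```

Both reshapes succeed because the element count is the same, so no error flags the mistake; only the picture comes out wrong.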


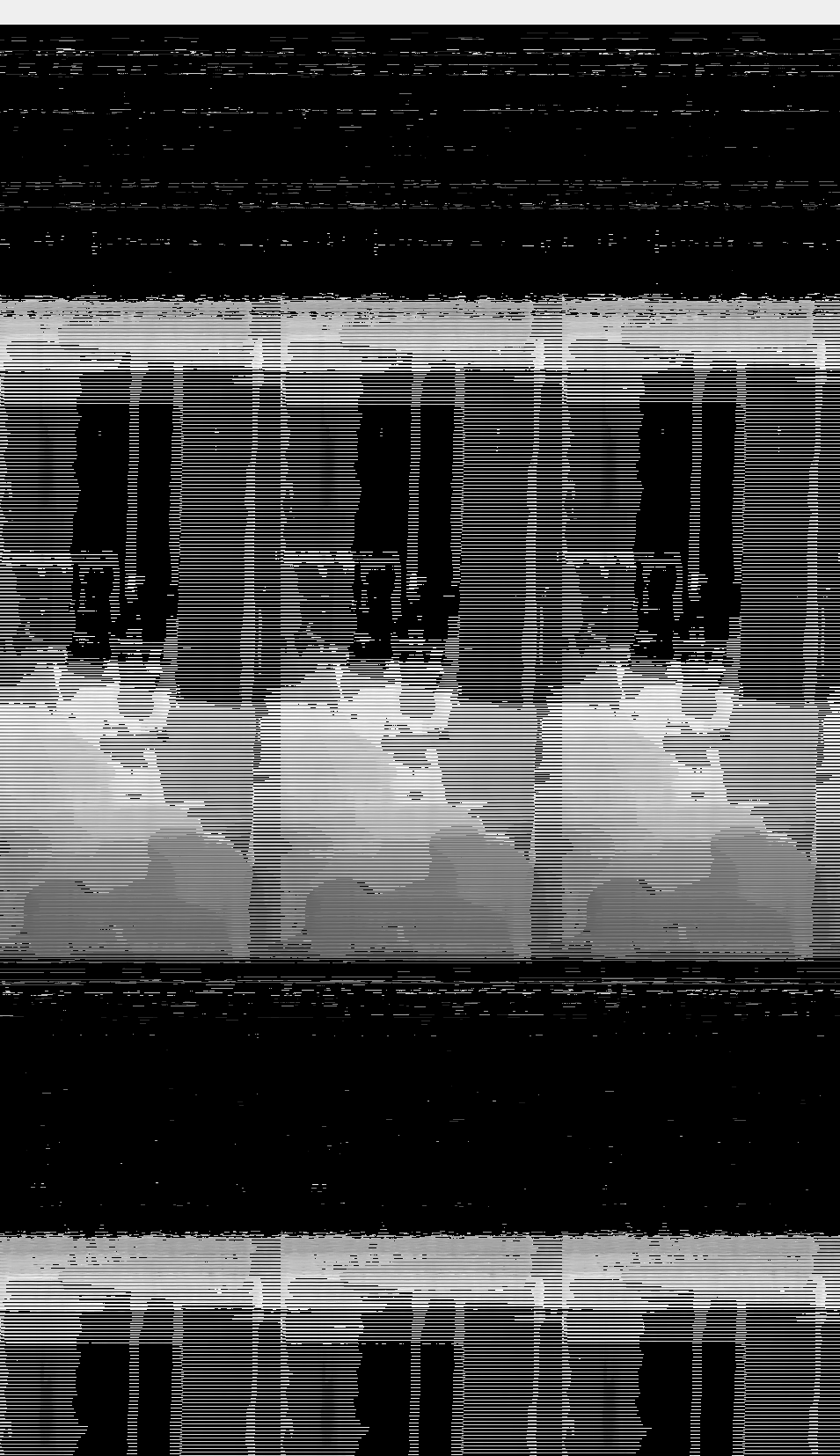

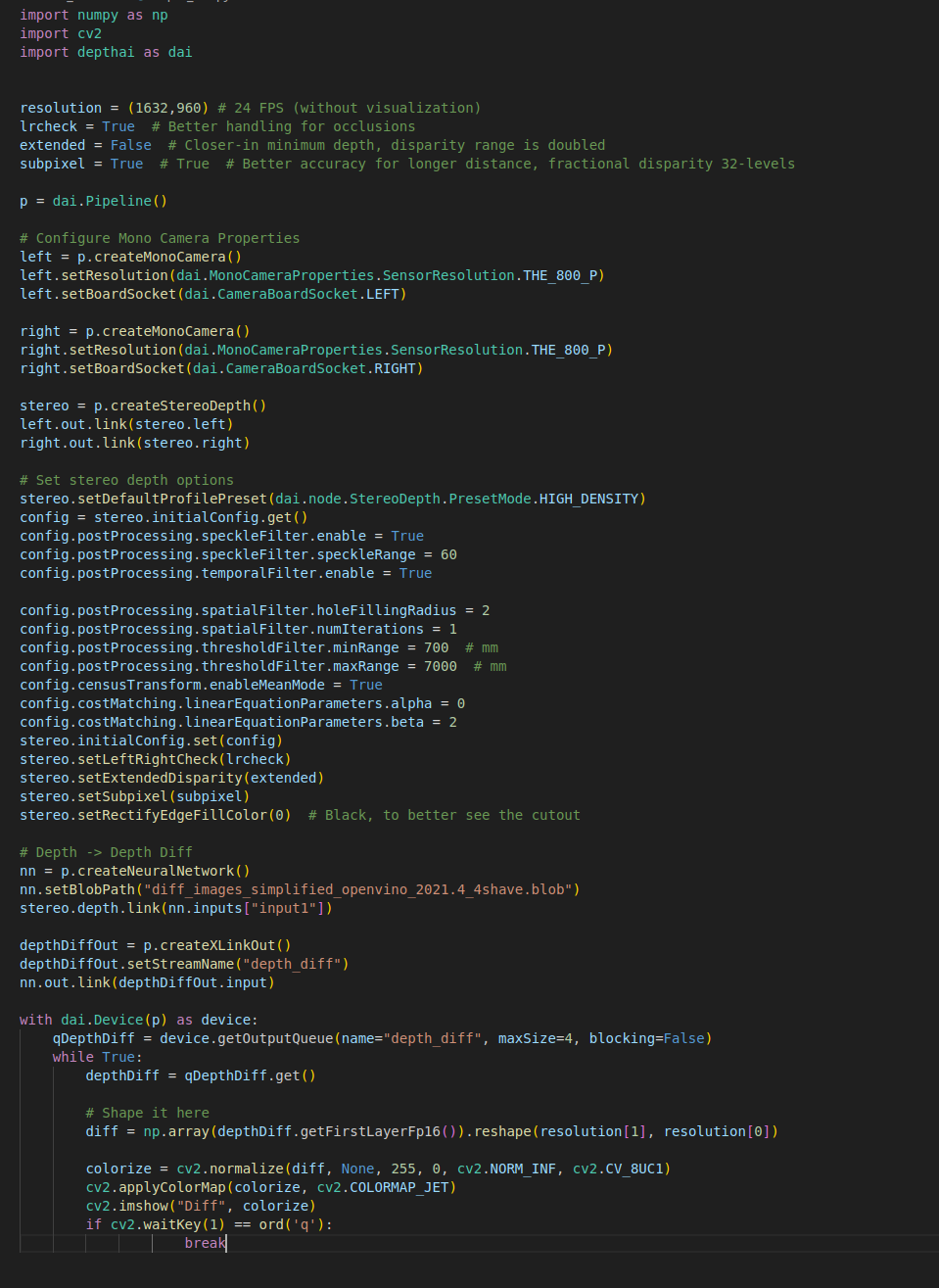

@erik This is the depthai code:

    import numpy as np
    import cv2
    import depthai as dai

    resolution = (1632,960) # 24 FPS (without visualization)
    lrcheck = True # Better handling for occlusions
    extended = False # Closer-in minimum depth, disparity range is doubled
    subpixel = True # True # Better accuracy for longer distance, fractional disparity 32-levels

    p = dai.Pipeline()

    # Configure Mono Camera Properties
    left = p.createMonoCamera()
    left.setResolution(dai.MonoCameraProperties.SensorResolution.THE_800_P)
    left.setBoardSocket(dai.CameraBoardSocket.LEFT)

    right = p.createMonoCamera()
    right.setResolution(dai.MonoCameraProperties.SensorResolution.THE_800_P)
    right.setBoardSocket(dai.CameraBoardSocket.RIGHT)

    stereo = p.createStereoDepth()
    left.out.link(stereo.left)
    right.out.link(stereo.right)

    # Set stereo depth options
    stereo.setDefaultProfilePreset(dai.node.StereoDepth.PresetMode.HIGH_DENSITY)
    config = stereo.initialConfig.get()
    config.postProcessing.speckleFilter.enable = True
    config.postProcessing.speckleFilter.speckleRange = 60
    config.postProcessing.temporalFilter.enable = True
    config.postProcessing.spatialFilter.holeFillingRadius = 2
    config.postProcessing.spatialFilter.numIterations = 1
    config.postProcessing.thresholdFilter.minRange = 700 # mm
    config.postProcessing.thresholdFilter.maxRange = 7000 # mm
    config.censusTransform.enableMeanMode = True
    config.costMatching.linearEquationParameters.alpha = 0
    config.costMatching.linearEquationParameters.beta = 2
    stereo.initialConfig.set(config)
    stereo.setLeftRightCheck(lrcheck)
    stereo.setExtendedDisparity(extended)
    stereo.setSubpixel(subpixel)
    stereo.setDepthAlign(dai.CameraBoardSocket.RGB)
    stereo.setRectifyEdgeFillColor(0) # Black, to better see the cutout

    # Depth -> Depth Diff
    nn = p.createNeuralNetwork()
    nn.setBlobPath("diff_images_simplified_openvino_2021.4_4shave.blob")
    stereo.disparity.link(nn.inputs["input1"])

    depthDiffOut = p.createXLinkOut()
    depthDiffOut.setStreamName("depth_diff")
    nn.out.link(depthDiffOut.input)

    with dai.Device(p) as device:
        qDepthDiff = device.getOutputQueue(name="depth_diff", maxSize=4, blocking=False)

        while True:
            depthDiff = qDepthDiff.get()

            # Shape it here
            floatVector = depthDiff.getFirstLayerFp16()
            diff = np.array(floatVector).reshape(resolution[0], resolution[1])

            colorize = cv2.normalize(diff, None, 255, 0, cv2.NORM_INF, cv2.CV_8UC1)
            cv2.applyColorMap(colorize, cv2.COLORMAP_JET)
            cv2.imshow("Diff", colorize)

            if cv2.waitKey(1) == ord('q'):
                break

This is the PyTorch code. I no longer subtract from a dummy frame and just pass the depth through:

    #! /usr/bin/env python3

    from pathlib import Path
    import torch
    from torch import nn
    import blobconverter
    import onnx
    from onnxsim import simplify
    import sys

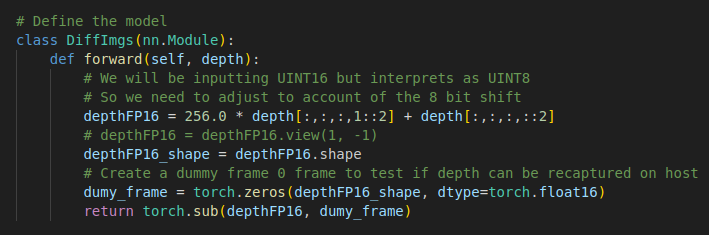

    # Define the model
    class DiffImgs(nn.Module):
        def forward(self, depth):
            # We will be inputting UINT16 but interprets as UINT8
            # So we need to adjust to account of the 8 bit shift
            depthFP16 = 256.0 * depth[:,:,:,1::2] + depth[:,:,:,::2]
            return depthFP16

            # depthFP16 = depthFP16.view(1, -1)
            # depthFP16_shape = depthFP16.shape
            # Create a dummy frame 0 frame to test if depth can be recaptured on host
            # dumy_frame = torch.zeros(depthFP16_shape, dtype=torch.float16)
            # return torch.sub(depthFP16, dumy_frame)

    # Instantiate the model
    model = DiffImgs()

    # Create dummy input for the ONNX export
    input1 = torch.randn(1, 1, 960, 1632 * 2, dtype=torch.float16)
    input2 = torch.randn(1, 1, 960, 1632 * 2, dtype=torch.float16)

    onnx_file = "diff_images.onnx"

    # Export the model
    torch.onnx.export(model, # model being run
                      (input1), # model input (or a tuple for multiple inputs)
                      onnx_file, # where to save the model (can be a file or file-like object)
                      opset_version=12, # the ONNX version to export the model to
                      do_constant_folding=True, # whether to execute constant folding for optimization
                      input_names = ['input1'], # the model's input names
                      output_names = ['output'])

    # Simplify the model
    onnx_model = onnx.load(onnx_file)
    onnx_simplified, check = simplify(onnx_file)
    onnx.save(onnx_simplified, "diff_images_simplified.onnx")

    # Use blobconverter to convert onnx->IR->blob
    blobconverter.from_onnx(
        model="diff_images_simplified.onnx",
        data_type="FP16",
        shaves=4,
        use_cache=False,
        output_dir="../",
        optimizer_params=[],
        compile_params=['-ip U8'],
    )

That is the resolution of my camRgb preview for person detection, which I got from this example: https://github.com/luxonis/depthai-experiments/blob/30e2460557a3209770eb8943db41bc997a423212/gen2-pedestrian-reidentification/api/main_api.py#L22

I think I found what you found. Even if you set the depth preview to a certain size, it won't make the image that big. I see now that the biggest that comes out is 1920x1080.

If my camera preview is 1632x960, how can I RGB-align the depth so that I can find the spatial location of each RGB pixel?

I am having trouble understanding how depthpreview, depth resolution, rgb align, and rgb resolution all play together.

I had at one point added a ValueError for the ML model if the input size was not the same as expected, but apparently that doesn't trigger anything in OpenVINO.
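Since a size mismatch apparently passes silently on the device, a host-side check is one way to catch it before trusting the output. This is only a sketch: `check_input_size` is a hypothetical helper, the 1280x800 frame is a made-up example, and the actual size would have to be read from the depth frame on the host.

```python
def check_input_size(actual, expected):
    """Compare a frame's (width, height) with the size the model was exported for."""
    if tuple(actual) != tuple(expected):
        raise ValueError(
            f"model expects {expected[0]}x{expected[1]}, "
            f"frame is {actual[0]}x{actual[1]}"
        )
    return True

MODEL_SIZE = (1632, 960)  # size used for the dummy input at export time

ok = check_input_size((1632, 960), MODEL_SIZE)

try:
    check_input_size((1280, 800), MODEL_SIZE)  # made-up mismatching frame
    mismatch_message = None
except ValueError as err:
    mismatch_message = str(err)
```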

Hello Adam, have you succeeded in subtracting 2 depth frames on the device yet?

Thank you Adam. I have a question about the model 'diff_images_simplified_openvino_2021.4_4shave.blob': is it generated by the PyTorch code here?

AdamPolak This is the pytorch code.

Is it still using the dummy input, or is the depth input here the result of the subtraction?

AdamPolak def forward(self, depth):

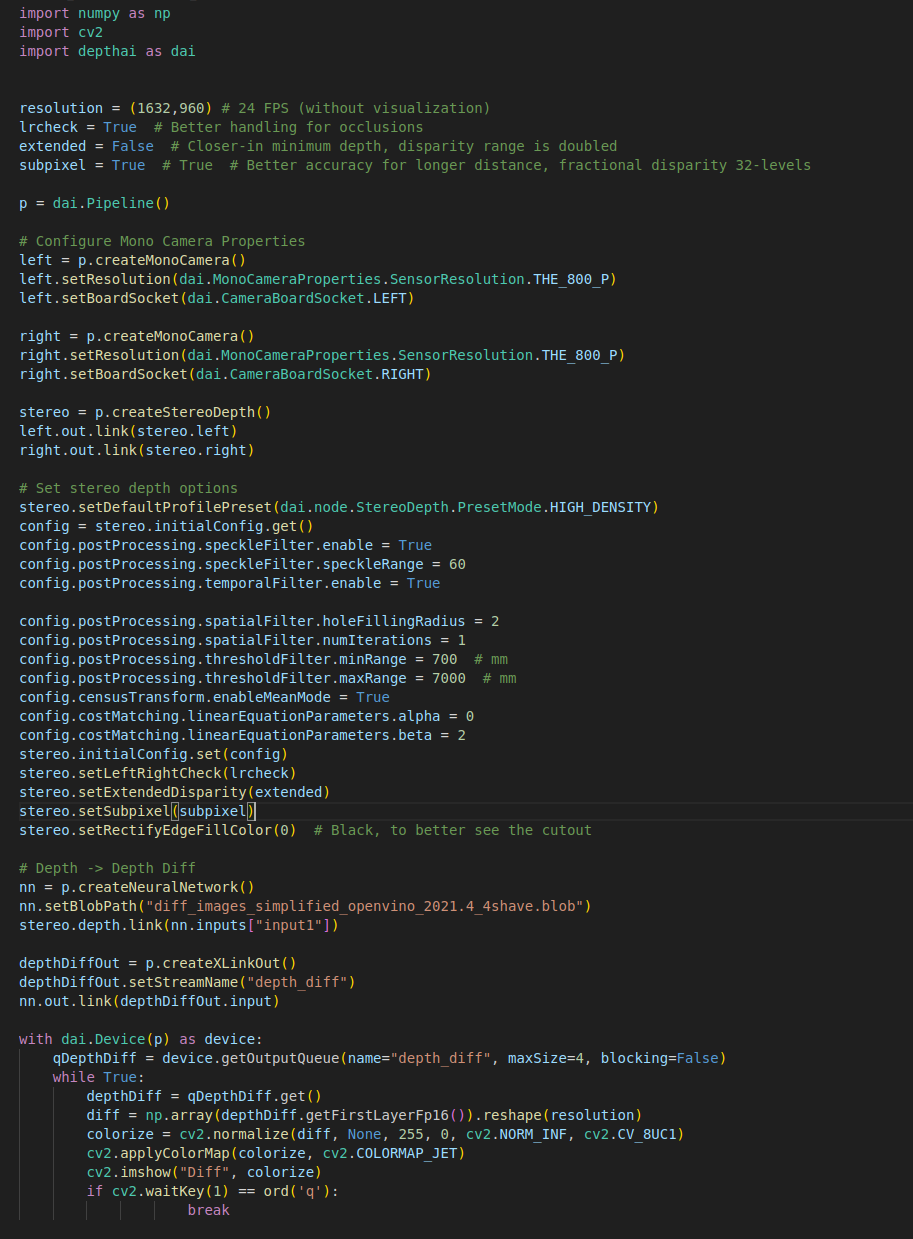

This is the "final" version to do a diff between 2 depth map images:

    #! /usr/bin/env python3

    from pathlib import Path
    import torch
    from torch import nn
    import blobconverter
    import onnx
    from onnxsim import simplify
    import sys

    # Define the model
    class DiffImgs(nn.Module):
        def forward(self, img1, img2):
            # We will be inputting UINT16 but interprets as UINT8
            # So we need to adjust to account of the 8 bit shift
            img1DepthFP16 = 256.0 * img1[:,:,:,1::2] + img1[:,:,:,::2]
            img2DepthFP16 = 256.0 * img2[:,:,:,1::2] + img2[:,:,:,::2]

            # Create binary masks for each image
            # A pixel in the mask is 1 if the corresponding pixel in the image is 0, otherwise it's 0
            img1Mask = (img1DepthFP16 == 0)
            img2Mask = (img2DepthFP16 == 0)

            # If a pixel is 0 in either image, set the corresponding pixel in both images to 0
            img1DepthFP16 = img1DepthFP16 * (~img1Mask & ~img2Mask)
            img2DepthFP16 = img2DepthFP16 * (~img1Mask & ~img2Mask)

            # Compute the difference between the two images
            diff = torch.sub(img1DepthFP16, img2DepthFP16)

            # Square the difference
            # square_diff = torch.square(diff)

            # # Compute the square root of the square difference
            # sqrt_diff = torch.sqrt(square_diff)
            # sqrt_diff[sqrt_diff < 1500] = 0

            return diff

    # Instantiate the model
    model = DiffImgs()

    # Create dummy input for the ONNX export
    input1 = torch.randn(1, 1, 320, 544 * 2, dtype=torch.float16)
    input2 = torch.randn(1, 1, 320, 544 * 2, dtype=torch.float16)

    onnx_file = "diff_images.onnx"

    # Export the model
    torch.onnx.export(model, # model being run
                      (input1, input2), # model input (or a tuple for multiple inputs)
                      onnx_file, # where to save the model (can be a file or file-like object)
                      opset_version=12, # the ONNX version to export the model to
                      do_constant_folding=True, # whether to execute constant folding for optimization
                      input_names = ['input1', 'input2'], # the model's input names
                      output_names = ['output'])

    # Simplify the model
    onnx_model = onnx.load(onnx_file)
    onnx_simplified, check = simplify(onnx_file)
    onnx.save(onnx_simplified, "diff_images_simplified.onnx")

    # Use blobconverter to convert onnx->IR->blob
    blobconverter.from_onnx(
        model="diff_images_simplified.onnx",
        data_type="FP16",
        shaves=4,
        use_cache=False,
        output_dir="../",
        optimizer_params=[],
        compile_params=['-ip U8'],
    )

Important to note! This does not take in dynamic image sizes. The input must be one fixed size. For some reason dynamic dimensions are not supported. So these 2 lines:

    # Create dummy input for the ONNX export
    input1 = torch.randn(1, 1, 320, 544 * 2, dtype=torch.float16)
    input2 = torch.randn(1, 1, 320, 544 * 2, dtype=torch.float16)

These define the size of the depth images coming in. Change 320 (height) and 544 (width) to your actual depth image size.
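The relationship between the depth frame size and the dummy input shape can be written down as a small helper. `dummy_input_shape` is a hypothetical name used only for illustration; the doubled width follows from the U16-as-U8 input used in this thread.

```python
def dummy_input_shape(width, height):
    """NCHW shape for the export dummy input.

    The width is doubled because each U16 depth pixel arrives as two U8 bytes.
    """
    return (1, 1, height, width * 2)

shape = dummy_input_shape(544, 320)
```

The resulting tuple could then be unpacked into the export call's dummy tensors, for example `torch.randn(*shape, dtype=torch.float16)`.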

These lines are what turn the U8 input (1 byte per value) back into U16 values (2 bytes per pixel):

    depthFP16 = 256.0 * depth[:,:,:,1::2] + depth[:,:,:,::2]

The reason is that the depth image arrives at the model as U16, but the model reads its input as U8. We tell the NN to do that with this compile parameter: compile_params=['-ip U8']

So the input has twice as many elements as the image has pixels, because each U16 pixel shows up as two U8 bytes. The operation above is a little trick to rebuild the original value: it multiplies the high byte (odd positions) by 256 and adds the low byte (even positions). In effect it converts the input from U8 back to the U16 value, held as FP16, which is what the NN requires.
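The byte trick can be tried on the host with NumPy. This is a sketch under stated assumptions: the depth values are made up, the byte order is assumed to be little-endian (low byte first, which is what the expression in the model implies), and float32 is used here instead of FP16.

```python
import numpy as np

# Made-up depth values in millimetres, stored as little-endian uint16.
depth_u16 = np.array([[0, 255, 256, 700],
                      [1000, 1234, 2000, 2048]], dtype="<u2")

# Reinterpret the same memory as bytes: each pixel becomes [low, high],
# so the last dimension is twice as wide.
as_bytes = depth_u16.view(np.uint8)

# Same expression as in the model: odd positions hold the high bytes,
# even positions hold the low bytes.
as_float = as_bytes.astype(np.float32)
recombined = 256.0 * as_float[:, 1::2] + as_float[:, ::2]
```

Here `recombined` matches the original values exactly. On the device the arithmetic runs in FP16, which cannot represent every integer above 2048 exactly, so larger depth values may come back slightly rounded.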

What is your use case? Do you also want to diff a "control depth" against a new depth frame, or something else?