Hi @nprasad,

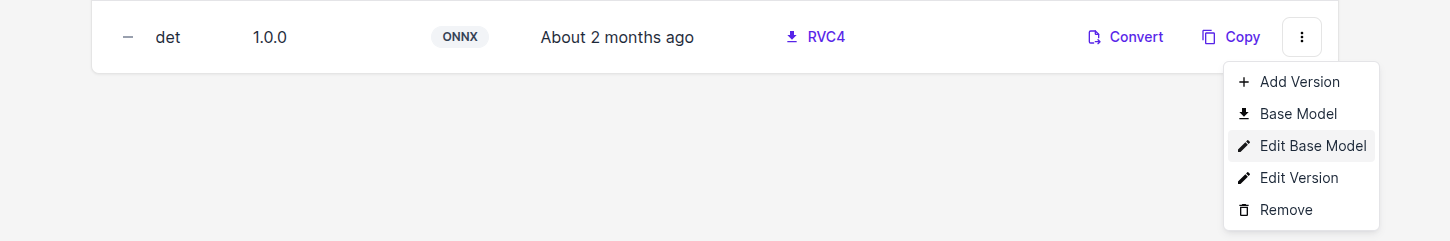

The YOLO conversion path through HubAI is designed to be as automatic as possible. Once you upload your .pt file, we create an ONNX NNArchive from it, depending on the auto-detected version, and then use that as the base for the conversion to the device-specific format. In the background, our tools package handles this .pt -> ONNX conversion. The resulting ONNX expects an input image that has already been normalized to the [0,1] range. This information is baked into the base model config, which you can view by inspecting it in HubAI: click the "Edit Base Model" button, as shown in the picture, and you'll see scale=255 and mean=0.
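To make the scale=255 and mean=0 values concrete, here is a minimal sketch of the normalization the ONNX base model expects if you were to run it yourself on the host. It assumes the usual `(pixel - mean) / scale` convention; the `normalize` helper and the tiny sample frame are made up for illustration and are not part of our tools package.

```python
import numpy as np

# Values as shown in the base model config in HubAI.
SCALE = 255.0
MEAN = 0.0


def normalize(frame: np.ndarray, mean: float = MEAN, scale: float = SCALE) -> np.ndarray:
    """Map a uint8 frame in [0, 255] to float32 in [0, 1].

    Hypothetical helper; assumes the (pixel - mean) / scale convention.
    """
    return (frame.astype(np.float32) - mean) / scale


# Made-up 2x2 single-channel frame, only for illustration.
frame = np.array([[0, 51], [102, 255]], dtype=np.uint8)
normalized = normalize(frame)
print(normalized.min(), normalized.max())
```

You would only need something like this when running the ONNX directly (for example for a quick check on your PC), not when running the converted model on device.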

Once the ONNX NNArchive is created, we need to convert it to the device-specific format. The modelconverter package is used for this in the background. One of the optimizations we automatically apply during this conversion is baking the normalization step directly into the model (it happens here for RVC2 conversion). We do this because, when the model runs on device with DepthAI, the camera frames are in the [0,255] range. That is what the model receives on its input, and because normalization is now part of the model itself, it happens automatically before the data hits the "actual model operations". In your app you don't need to think about this: you just connect the camera output to the model input and it works.
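As a toy illustration of what "baking in" the normalization means, the sketch below compares normalizing on the host with having the normalization as the first step of the model. This is not how modelconverter implements it internally; the single linear layer stands in for the real network, and `base_model` and `device_model` are hypothetical names.

```python
import numpy as np

SCALE = 255.0
MEAN = 0.0

rng = np.random.default_rng(0)

# Hypothetical stand-in for the "actual model operations": one linear layer.
weights = rng.standard_normal((4, 3)).astype(np.float32)
bias = rng.standard_normal(4).astype(np.float32)


def base_model(x: np.ndarray) -> np.ndarray:
    """Like the ONNX base model: expects input already normalized to [0, 1]."""
    return weights @ x + bias


def device_model(raw: np.ndarray) -> np.ndarray:
    """Like the converted model: normalization is the first step inside the model."""
    x = (raw - MEAN) / SCALE
    return base_model(x)


# Raw pixel values in [0, 255], as a camera would deliver them.
raw_pixels = np.array([0.0, 128.0, 255.0], dtype=np.float32)

host_side = base_model(raw_pixels / 255.0)  # normalize outside the model
on_device = device_model(raw_pixels)        # feed raw pixels directly

assert np.allclose(host_side, on_device)
```

Both paths give the same output, which is why you can feed camera frames straight into the converted model.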

These options are grayed out during conversion because the ONNX NNArchive already contains the information about its preprocessing ([0,255]), and based on that we know what to do for the RVC2 model so that it is exported in an optimized way for on-device usage.

Hopefully this sheds some light on the process that happens in the background. If anything is still unclear, let me know.

Best,

Klemen