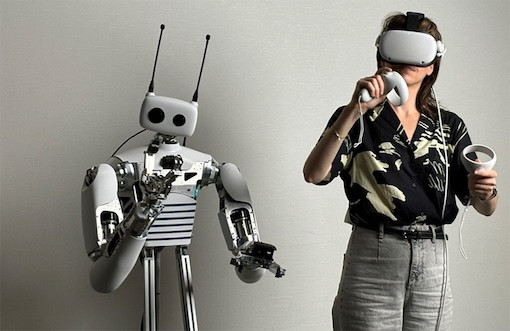

Pollen Robotics is rethinking how we build and teach humanoid robots. Their flagship platform, Reachy 2, is a fully programmable robot designed to interact with the physical world, whether that’s performing tasks, learning by demonstration, or serving as a research platform for human-robot interaction.

What makes Pollen’s approach stand out is their focus on embodied AI: robots that learn by doing, not just from code. At the center of this approach is teleoperation: using VR to control the robot in real time and generate high-quality training data for the robot to learn from.

But for teleoperation to work well, the robot needs to see like a human, through stereo vision, and with as little delay as possible.

A Human-Centric Vision System Built with Luxonis

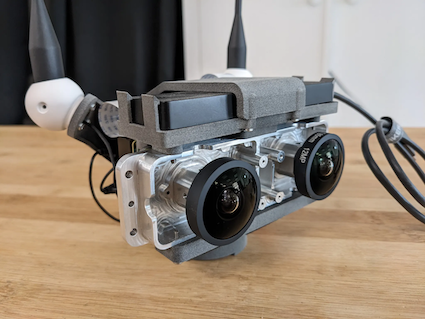

To make this possible, Pollen developed a custom stereo camera rig using the OAK Module FFC-4P platform, featuring:

- Two IMX296 RGB sensors
- A 64 mm stereo baseline, matching the typical distance between human eyes
- Fisheye lenses for a wide field of view
- On-device image processing via DepthAI
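The 64 mm baseline also fixes the geometry available for depth sensing. As a minimal sketch, assuming an ideal rectified pinhole stereo pair (fisheye images have to be rectified first, which this rig does on-device), depth follows Z = f·B/d, where f is the focal length in pixels, B the baseline, and d the disparity in pixels. The focal length below is a hypothetical value chosen for round numbers, not a measured property of Pollen's lenses, and the helper names are illustrative.

```python
BASELINE_M = 0.064  # 64 mm stereo baseline, as described for Pollen's rig
FOCAL_PX = 500.0    # HYPOTHETICAL focal length in pixels, for illustration only


def depth_from_disparity(disparity_px: float, focal_px: float = FOCAL_PX,
                         baseline_m: float = BASELINE_M) -> float:
    """Depth in metres of a point with the given disparity: Z = f * B / d.

    Assumes an ideal, rectified pinhole stereo pair.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means a point at infinity)")
    return focal_px * baseline_m / disparity_px


def depth_uncertainty(depth_m: float, disparity_err_px: float = 1.0,
                      focal_px: float = FOCAL_PX,
                      baseline_m: float = BASELINE_M) -> float:
    """First-order depth error for a disparity error: dZ ≈ Z**2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_err_px / (focal_px * baseline_m)


if __name__ == "__main__":
    for d in (64.0, 32.0, 16.0):
        z = depth_from_disparity(d)
        err = depth_uncertainty(z)
        print(f"disparity {d:4.0f} px -> depth {z:.2f} m, ±{err:.3f} m per pixel of error")
```

Because the error grows with the square of distance and shrinks as the baseline grows, baseline and focal length are usually chosen together; here the 64 mm value is driven by mirroring human eye spacing for stereo viewing in VR.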

This modular setup outputs synchronized 60 FPS video streams, which are stereo-rectified and compressed directly on the device. An H.264 stream is sent via WebRTC to the operator’s headset, while JPEG frames feed into Pollen’s internal ROS-based SDK for additional logging and analysis.
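The post does not describe how the two streams are kept in step; on the device, that is handled by DepthAI and the camera hardware. To illustrate what "synchronized" means at 60 FPS, here is a minimal, hypothetical pure-Python sketch that pairs left and right frames whose timestamps fall within half a frame period (about 8.3 ms) of each other. The `Frame` class and `pair_frames` function are illustrative names, not part of the DepthAI API.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

FPS = 60
FRAME_PERIOD_S = 1 / FPS  # ≈ 16.7 ms between frames at 60 FPS


@dataclass
class Frame:
    """Hypothetical frame record: source camera, capture time, payload."""
    camera: str          # "left" or "right"
    timestamp_s: float   # capture time in seconds
    data: bytes = b""    # image payload (unused in this sketch)


def pair_frames(
    left: Sequence[Frame],
    right: Sequence[Frame],
    tolerance_s: float = FRAME_PERIOD_S / 2,
) -> List[Tuple[Frame, Frame]]:
    """Pair left/right frames whose timestamps differ by at most tolerance_s.

    Both sequences must be sorted by timestamp. A frame with no partner
    within the tolerance is dropped, so the output is a clean stereo stream.
    """
    pairs: List[Tuple[Frame, Frame]] = []
    i = j = 0
    while i < len(left) and j < len(right):
        dt = left[i].timestamp_s - right[j].timestamp_s
        if abs(dt) <= tolerance_s:
            pairs.append((left[i], right[j]))
            i += 1
            j += 1
        elif dt < 0:
            i += 1  # left frame is too old to match any remaining right frame
        else:
            j += 1  # right frame is too old to match any remaining left frame
    return pairs


if __name__ == "__main__":
    left = [Frame("left", k * FRAME_PERIOD_S) for k in range(5)]
    # Right camera: 1 ms skew and a dropped frame at index 2.
    right = [Frame("right", k * FRAME_PERIOD_S + 0.001) for k in range(5) if k != 2]
    pairs = pair_frames(left, right)
    print(len(pairs))  # 4: the left frame at index 2 has no partner and is discarded
```

Each resulting pair could then be handed to both consumers described above, the live stream and the logging path, so the two always see the same stereo view.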

The system delivers a total latency of around 125 ms, enabling smooth and natural VR control even during fast-paced tasks like ping-pong.
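To put the 125 ms figure in perspective, a short calculation converts it into 60 FPS frame periods and into the distance a moving object covers before the operator sees it. The ball speed is an assumed illustrative value, not a measurement from Pollen's ping-pong demo.

```python
FPS = 60                # stream frame rate, from the rig description
LATENCY_MS = 125.0      # reported total latency
BALL_SPEED_MPS = 10.0   # ASSUMED ping-pong ball speed, for illustration only


def frame_period_ms(fps: float) -> float:
    """Time between consecutive frames, in milliseconds."""
    return 1000.0 / fps


def latency_in_frames(latency_ms: float, fps: float) -> float:
    """Number of frame periods that fit into the given latency."""
    return latency_ms * fps / 1000.0


def travel_during_latency(speed_mps: float, latency_ms: float) -> float:
    """Distance in metres covered at speed_mps during the latency."""
    return speed_mps * latency_ms / 1000.0


if __name__ == "__main__":
    print(f"frame period: {frame_period_ms(FPS):.2f} ms")
    print(f"latency: {latency_in_frames(LATENCY_MS, FPS):.1f} frame periods")
    print(f"ball travel: {travel_during_latency(BALL_SPEED_MPS, LATENCY_MS):.2f} m")
```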

Why Luxonis: Modularity and Developer Experience

Pollen chose Luxonis not just for the performance, but for the flexibility and developer-friendliness of the platform.

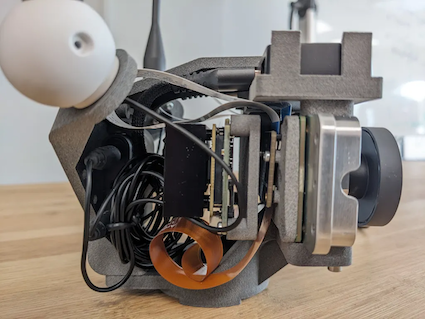

Unlike fixed stereo cameras, Luxonis’ modular FFC series separates the compute board from the sensors, allowing teams to choose the exact lenses, sensors, and stereo baseline they need for their application. This was key for Reachy 2, where every millimeter of camera placement affects the final experience. Our intuitive manual calibration guide streamlined the depth-sensing setup, letting Pollen move quickly through prototyping with a solution that scales to future units.

The DepthAI SDK handles key tasks like stereo rectification, compression, and device-side logic, removing the need for extra compute modules or custom firmware. Native support for Python and ROS 2 made integration quick and clean.

This combination of modularity and software simplicity meant Pollen could iterate faster, test new configurations, and move from prototype to production without overhauling their stack.

From Teleoperation to Autonomy

While teleop is the primary focus today, Reachy 2 is already taking steps toward autonomy. Now part of Hugging Face, Pollen has integrated Reachy into the LeRobot framework to support scripted tasks and early autonomous behaviors. These currently run on external compute, but they rely on the same Luxonis stereo vision setup to perceive the environment.

Pollen Robotics is building a new kind of robot: open, adaptable, and designed to learn from the world around it. With Luxonis providing reliable, low-latency stereo vision, Reachy is better equipped to understand its environment and evolve with it.

Whether you’re developing research tools or building the next generation of robotic assistants, modular perception systems and fast integration make all the difference. We’re excited to support Pollen’s work and to see where Reachy goes next.

🔗 Explore Reachy 2

📸 Learn more about Luxonis FFC-4P