Depth estimation is a core capability for many real-time perception systems, from robotics and autonomous machines to smart cameras and spatial AI applications. Traditionally, developers have had to choose between classical stereo algorithms and deep neural networks—each with clear strengths, but also significant drawbacks.

Neural-Assisted Stereo (NAS) bridges this gap. It is a hybrid depth estimation method that combines the speed and efficiency of classical Semi-Global Matching (SGM) with the robustness and high fill-rate of LENS (Luxonis Edge Neural Stereo). The result is a fast, stable, and high-quality depth solution optimized for real-time deployment.

Neural-Assisted Stereo Approach

Neural-Assisted Stereo (NAS) addresses these challenges by leveraging the speed and efficiency of classical SGM together with the high fill-rate and improved accuracy of a neural network. Rather than replacing classical stereo, NAS enhances it with neural guidance, achieving a balanced and practical solution for real-time depth estimation.

Key Features and Technology

The NAS Architecture

Low-Resolution Neural Disparity Map Generation

The input stereo images are downscaled (for example, to 384 × 240 pixels) and processed by a custom neural network, the LENS-Nano model. This network generates a low-resolution disparity map that captures the global structure of the scene.
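Because disparity is measured in pixels, a map computed at low resolution cannot be reused at full resolution as-is: each value has to be multiplied by the horizontal resize factor. The sketch below illustrates that relationship with nearest-neighbour upsampling in plain Python. It is not the NAS implementation; the function name `upscale_disparity` and the sizes used are assumptions made for illustration.

```python
def upscale_disparity(low_res, full_width, full_height):
    """Nearest-neighbour upsample of a disparity map (list of rows).

    Hypothetical helper, not part of depthai. Disparity is a horizontal
    pixel offset, so values are scaled by the horizontal resize factor.
    """
    low_h = len(low_res)
    low_w = len(low_res[0])
    scale_x = full_width / low_w  # horizontal resize factor

    full_res = []
    for y in range(full_height):
        src_y = min(low_h - 1, y * low_h // full_height)
        row = []
        for x in range(full_width):
            src_x = min(low_w - 1, x * low_w // full_width)
            # Copy the nearest low-res value and rescale it to full-res pixels.
            row.append(low_res[src_y][src_x] * scale_x)
        full_res.append(row)
    return full_res


if __name__ == "__main__":
    tiny = [[1.0, 2.0]]  # 2 x 1 low-resolution disparity map
    print(upscale_disparity(tiny, full_width=4, full_height=2))
```

A disparity of 1 pixel at half width corresponds to 2 pixels at full width, which is why the values are scaled and not only the grid.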

Pattern Injection

The low-resolution disparity map is injected into the original high-resolution left and right images as an artificial pattern. This pattern provides guidance for the subsequent classical SGM algorithm.

Smart Injection Algorithm

A custom algorithm determines where the artificial pattern should be injected based on local image texture strength. This prevents the low-resolution neural output from covering or degrading small objects that might otherwise be missed.
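The actual smart injection algorithm is not described here, so the following is only a sketch of the general idea of gating by texture strength. It assumes a simple texture measure (the mean absolute horizontal gradient in a small window) and a fixed threshold; the names `texture_strength` and `injection_mask`, the window radius, and the threshold value are all hypothetical. The horizontal gradient is used because stereo matching on rectified images searches along image rows.

```python
def texture_strength(image, radius=1):
    """Mean absolute horizontal gradient in a (2*radius+1)^2 window.

    `image` is a list of rows of grayscale values. Assumed texture measure,
    chosen for illustration only.
    """
    h, w = len(image), len(image[0])
    # Forward difference along each row; the last column has no right neighbour.
    grad = [[abs(row[min(x + 1, w - 1)] - row[x]) for x in range(w)]
            for row in image]

    strength = []
    for y in range(h):
        out_row = []
        for x in range(w):
            total, count = 0.0, 0
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += grad[yy][xx]
                    count += 1
            out_row.append(total / count)
        strength.append(out_row)
    return strength


def injection_mask(image, threshold=4.0, radius=1):
    """True where local texture is weak, i.e. where guidance could be injected."""
    return [[s < threshold for s in row]
            for row in texture_strength(image, radius)]


if __name__ == "__main__":
    # Left half: flat wall. Right half: strongly textured stripes.
    row = [100, 100, 100, 100, 0, 255, 0, 255]
    mask = injection_mask([row, row, row])
    print(mask[1])
```

In this toy example the flat left side is marked for injection while the striped right side is left untouched, which mirrors the stated goal of not overwriting detail that classical matching can already resolve.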

Final Matching

The classical SGM algorithm performs matching using the modified stereo images, resulting in a more accurate and stable disparity estimation.

Result

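This hybrid approach achieves a higher fill-rate and fewer large artifacts than classical SGM alone, while running much faster than a pure neural network. The details are covered under Performance and Strengths.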

Parameters

- Inputs: left rectified image, right rectified image

- Outputs: depth map, disparity map, confidence map

- Neural Model: custom LENS-Nano model

- Model Tuning: parameters were tuned on an internal Luxonis dataset of 50,000 images to optimize performance across various scenarios.
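The depth and disparity outputs are related by the standard stereo triangulation formula, depth = focal length × baseline / disparity. The sketch below shows that conversion in plain Python. The function name, the focal length and baseline values, and the convention that a disparity of 0 marks an invalid pixel are assumptions for illustration, not OAK4-D calibration data or depthai behaviour.

```python
def disparity_to_depth(disparity, focal_length_px, baseline_m, invalid=0.0):
    """Convert a disparity map (pixels) to a depth map (metres).

    Uses depth = focal_length_px * baseline_m / disparity.
    Pixels equal to `invalid` (assumed convention) are returned as 0.0.
    """
    depth = []
    for row in disparity:
        depth_row = []
        for d in row:
            if d == invalid:
                depth_row.append(0.0)  # no match found, so no depth
            else:
                depth_row.append(focal_length_px * baseline_m / d)
        depth.append(depth_row)
    return depth


if __name__ == "__main__":
    # Made-up values: 800 px focal length, 7.5 cm baseline.
    print(disparity_to_depth([[40.0, 0.0, 8.0]], 800.0, 0.075))
```

Since depth is inversely proportional to disparity, a fixed disparity error costs more depth accuracy at long range than at short range.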

Performance and Strengths

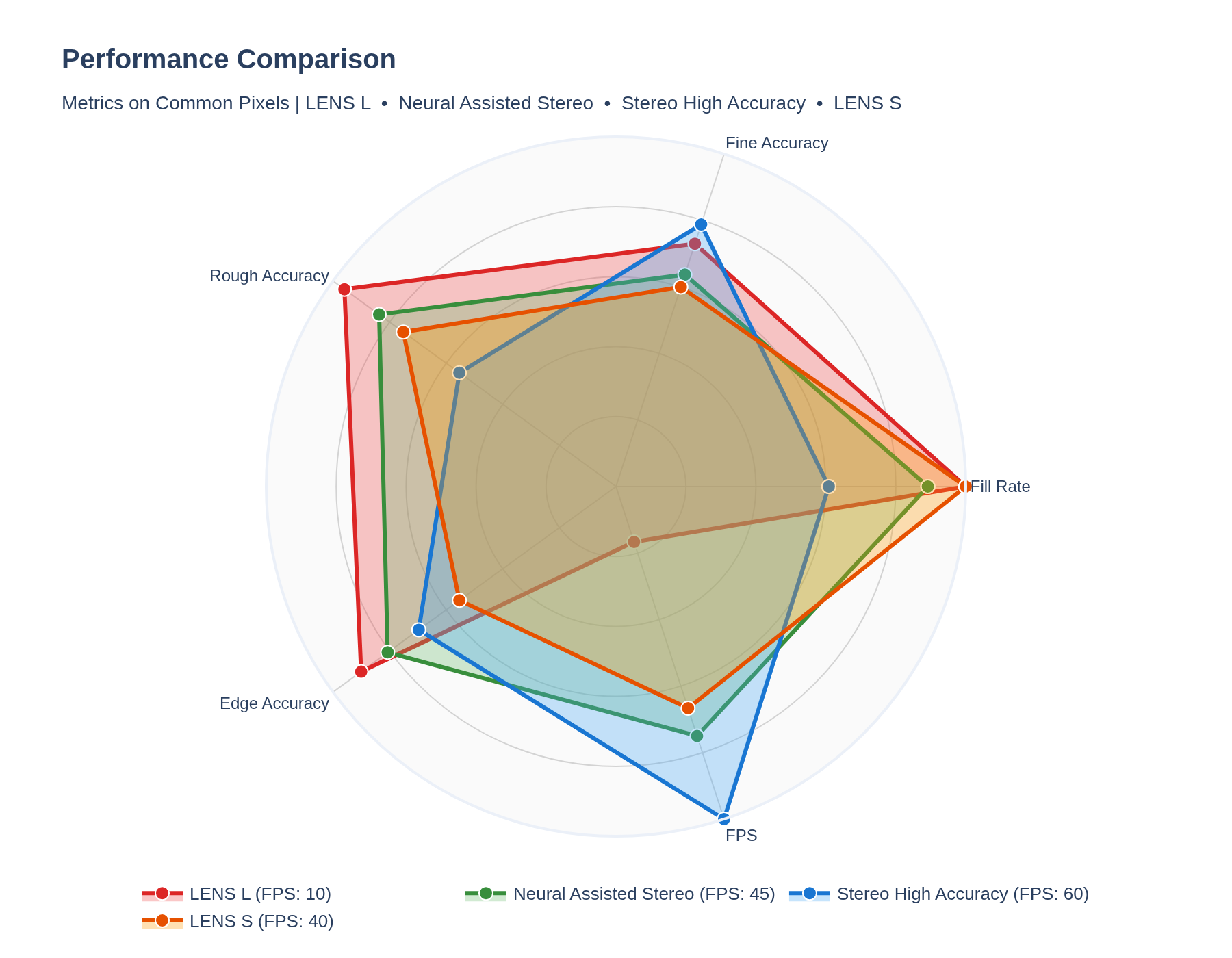

The NAS method provides significant advantages over traditional and pure neural network approaches:

- Improved Quality and Fill-Rate: Higher quality and fill-rate than classical SGM, especially on textureless surfaces (white walls), repetitive patterns (fences), and reflective surfaces (shiny floors).

- High Speed: Operates at up to 45 frames per second (FPS) at full resolution, compared to approximately 10 FPS for the LENS-Large variant of the pure neural network solution.

- Reduced Hallucination: Lower hallucination rate than classical stereo run with its high-accuracy and high-density settings, with significantly fewer large artifacts as measured by the "Disparity Bad 10" metric.

- Small Object Preservation: Better performance than pure neural networks in small object detection applications, because NAS operates at a higher fill-rate and preserves original object details, whereas pure neural stereo tends to blur objects.
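Two of the quantities mentioned above, fill-rate and a "bad N" error rate, are straightforward to compute when a ground-truth disparity map is available. The sketch below follows the convention common in stereo benchmarks, where "bad N" is the fraction of compared pixels whose disparity error exceeds N pixels. The exact definitions used for the figures in this article are not given, so treat the function names, the invalid-pixel value of 0, and the threshold handling as assumptions.

```python
def fill_rate(disparity, invalid=0.0):
    """Fraction of pixels that carry a valid disparity value."""
    total = sum(len(row) for row in disparity)
    valid = sum(1 for row in disparity for d in row if d != invalid)
    return valid / total if total else 0.0


def bad_n(estimated, ground_truth, threshold=10.0, invalid=0.0):
    """Fraction of compared pixels with |estimate - ground truth| > threshold.

    Only pixels valid in both maps are compared (assumed convention).
    """
    bad = 0
    compared = 0
    for est_row, gt_row in zip(estimated, ground_truth):
        for est, gt in zip(est_row, gt_row):
            if est == invalid or gt == invalid:
                continue
            compared += 1
            if abs(est - gt) > threshold:
                bad += 1
    return bad / compared if compared else 0.0


if __name__ == "__main__":
    estimated = [[10.0, 0.0, 50.0, 31.0]]
    ground_truth = [[12.0, 20.0, 30.0, 20.0]]
    print("fill rate:", fill_rate(estimated))
    print("bad 10:", bad_n(estimated, ground_truth))
```

The two numbers are best read together: a method can raise its fill-rate by guessing in difficult regions, and a rising bad-N value is what exposes those guesses.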

Use Case

The Neural-Assisted Stereo method is ideal for applications that require both high fill-rate and high frame-rate performance. Its improved stability and reduced number of large artifacts make NAS a robust solution for real-time depth sensing across a wide range of operational environments.

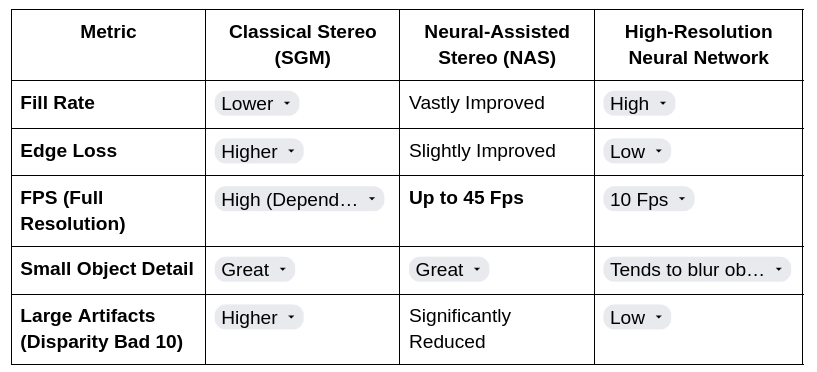

Figure: Radar chart comparing RVC4 depth estimation methods. Metrics include:

- Fine Accuracy: percentage of pixels with high precision relative to ground truth (GT)

- Rough Accuracy: percentage of pixels with acceptable accuracy

- Edge Accuracy: error measuring the preservation of object boundaries

- Frame Rate: frames per second (FPS)

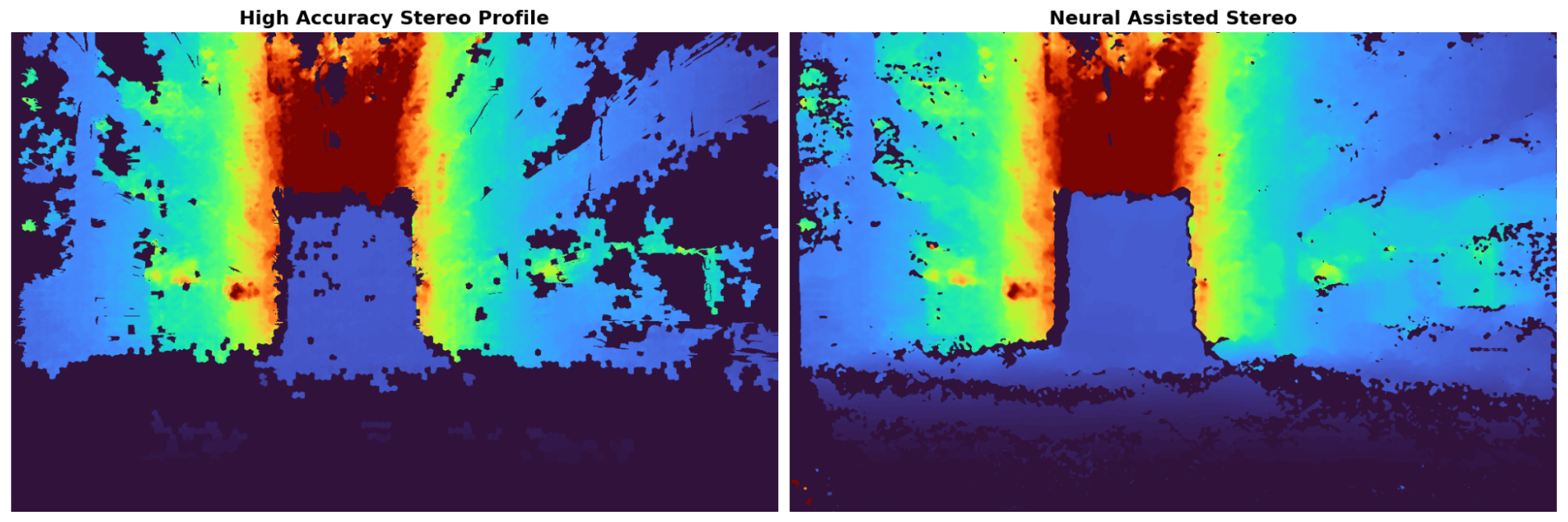

The scene was captured in a warehouse:

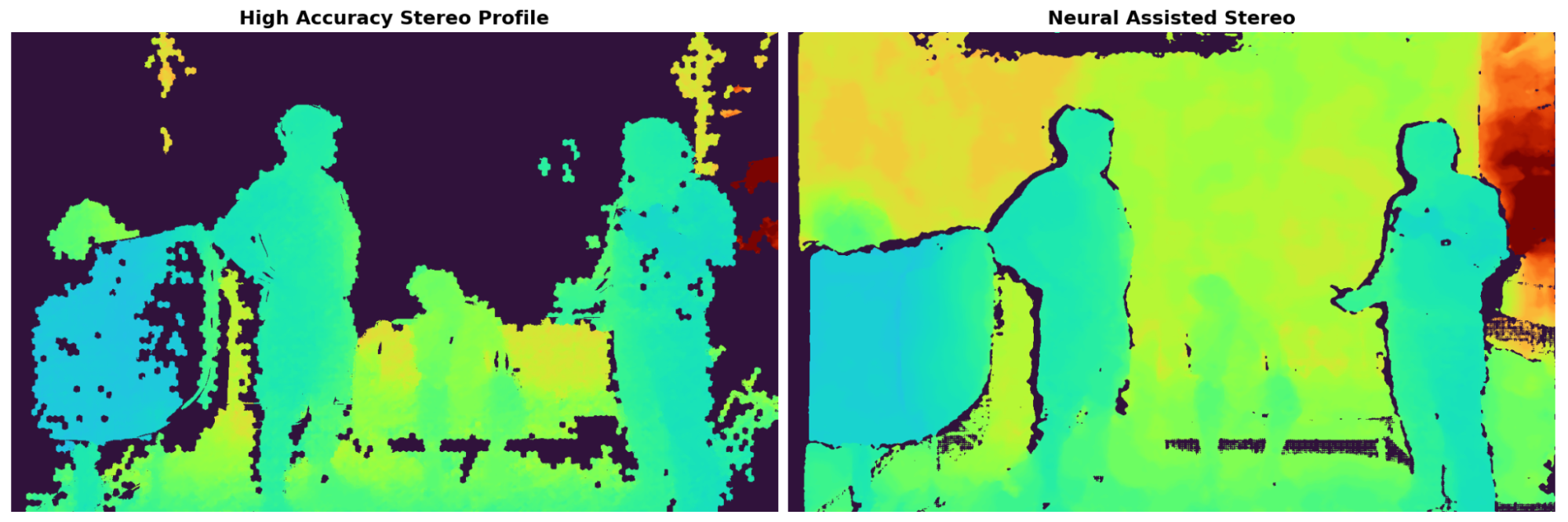

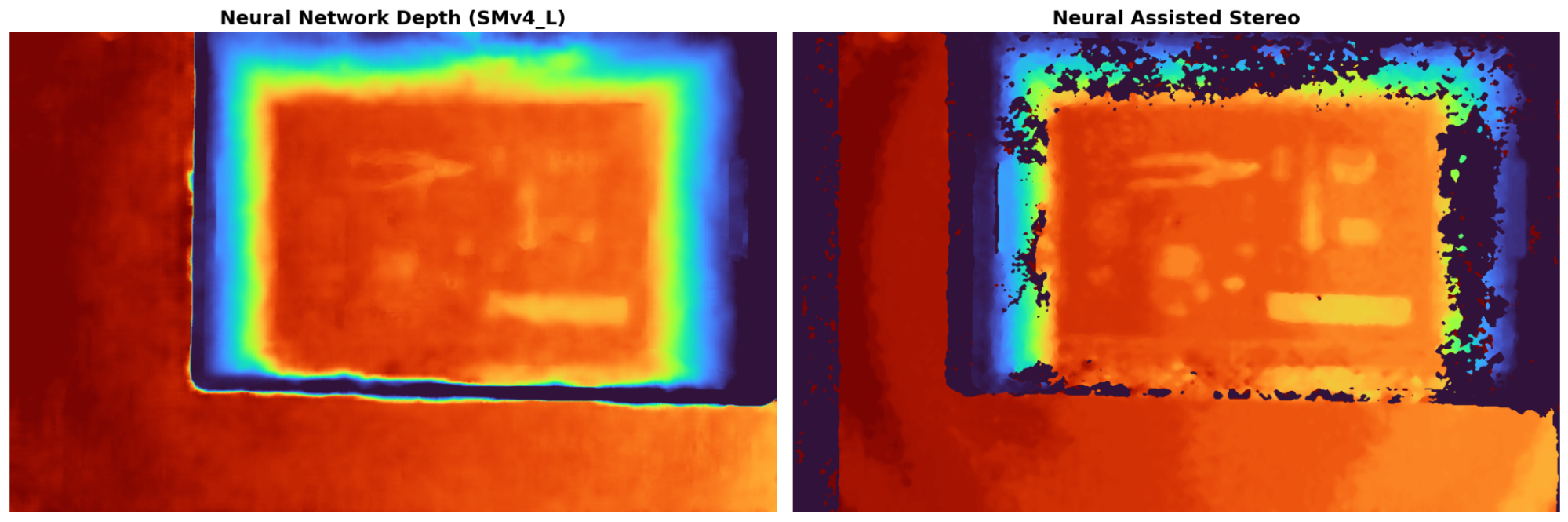

Fill Rate Improvement: Neural-Assisted Stereo (NAS) vs. High Accuracy Profile

Small Object Preservation: The NAS method preserves the detail of small objects, a significant advantage over pure neural stereo approaches, which tend to blur them.

Try it out for yourself

With an OAK4-D camera connected, clone the depthai-core repository, install the dependencies, and run the Neural-Assisted Stereo example with the following commands:

git clone https://github.com/luxonis/depthai-core.git

cd depthai-core/

python3 -m venv venv # if you are on Linux

source venv/bin/activate # if you are on Linux

python3 examples/python/install_requirements.py

python3 examples/python/NeuralAssistedStereo/neural_assisted_stereo.py

If another app is still running on the camera, you might get this error:

RuntimeError: No available devices (1 connected, but in use)

In this case, stop the running app. First, list the apps on the device:

oakctl app list

Then copy the App Id of the app whose status is running, and stop it with:

oakctl app stop INSERT_APP_ID_HERE

Replace INSERT_APP_ID_HERE with your App Id.

Conclusion

Neural-Assisted Stereo (NAS) demonstrates that classical and neural approaches don’t have to compete—they can complement each other. By intelligently combining lightweight neural guidance with a proven stereo algorithm, NAS delivers high-quality depth estimation at real-time speed, without overwhelming system resources.

For real-world deployments where performance, accuracy, and efficiency all matter, NAS provides a powerful and practical solution.