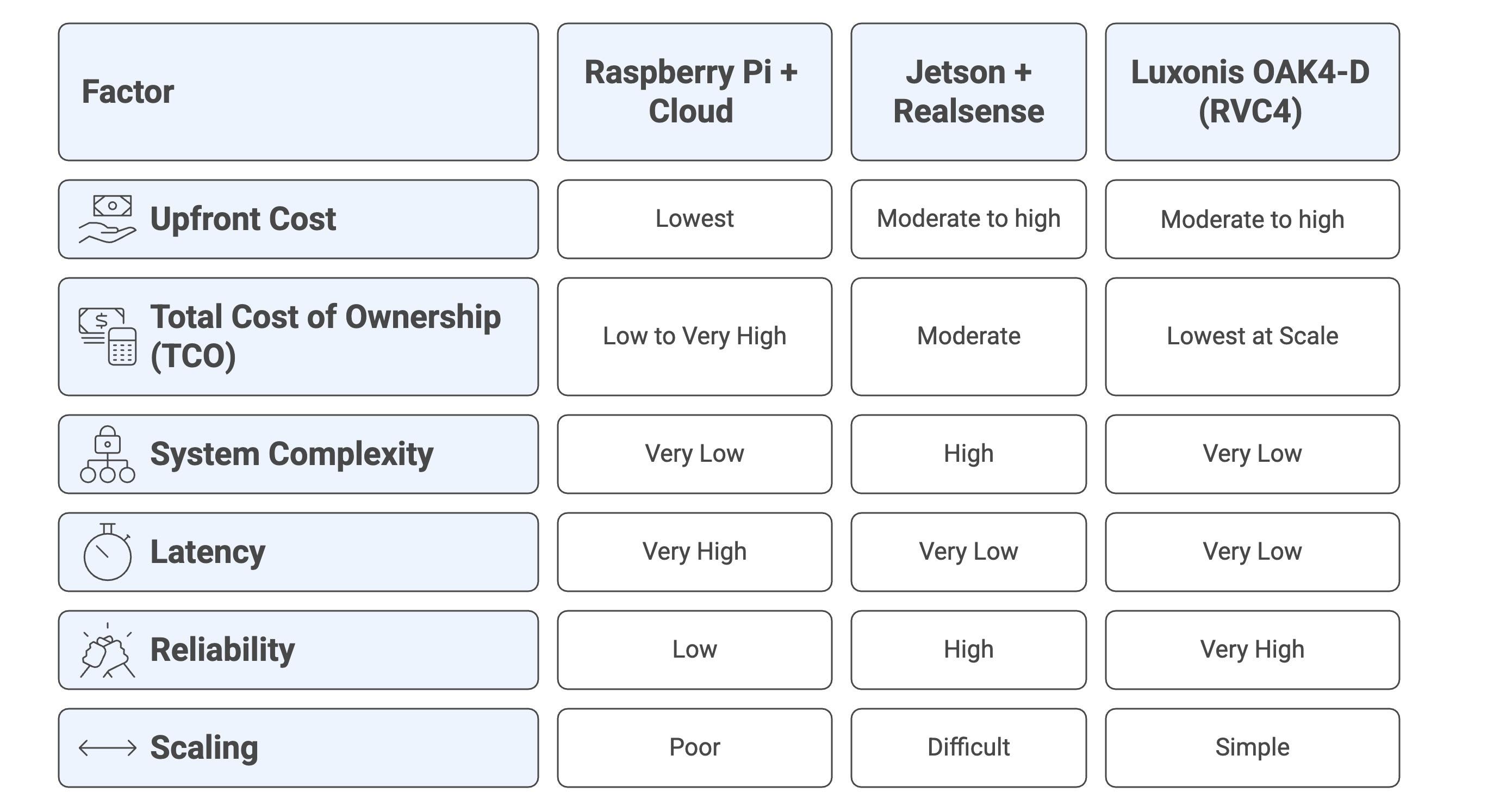

Building a computer vision system requires a critical decision: where should the data be processed, in the cloud or at the edge? Different setups come with significant trade-offs in cost, complexity, latency, and scalability. This guide compares three common vision system architectures across a spectrum of use cases, from simple monitoring to complex robotics. Our goal is to help you understand the compromises and choose the right architecture, while demonstrating how the all-in-one Luxonis OAK4-D (RVC4) often emerges as the simplest and most cost-effective option for robust edge deployments.

The Three Architectures in Focus

We will evaluate the following three approaches, which represent the full spectrum of edge and cloud deployment:

1. Raspberry Pi + Cloud

A low-cost IoT device (e.g., Raspberry Pi with a camera module) that captures images and offloads all processing and AI inference to powerful cloud services (e.g., AWS, GCP).

- Pros: Lowest upfront hardware cost, leverages scalable cloud compute.

- Cons: High latency (network round-trip), dependence on internet connectivity, recurring cloud fees.

2. NVIDIA Jetson + RealSense

An edge computing approach using a powerful single-board computer (Jetson) for heavy AI processing, paired with a 3D depth camera (e.g., Intel RealSense).

- Pros: Real-time, low-latency inference on-premise, strong performance, mature depth sensing.

- Cons: High integration complexity (two devices, cabling, drivers, synchronization), multi-component hardware cost, higher power consumption.

3. Luxonis OAK4-D

A standalone edge AI camera with an integrated, high-performance computing core (RVC4 chip: 6-core CPU, 52 TOPS AI accelerator). It runs the full computer vision pipeline on-device and requires no external host computer.

- Pros: Extremely low complexity (one device), ultra-low latency, simplified scaling, low engineering effort.

- Cons: Higher upfront unit cost.

Use Case Comparison: Where Each System Shines (or Fails)

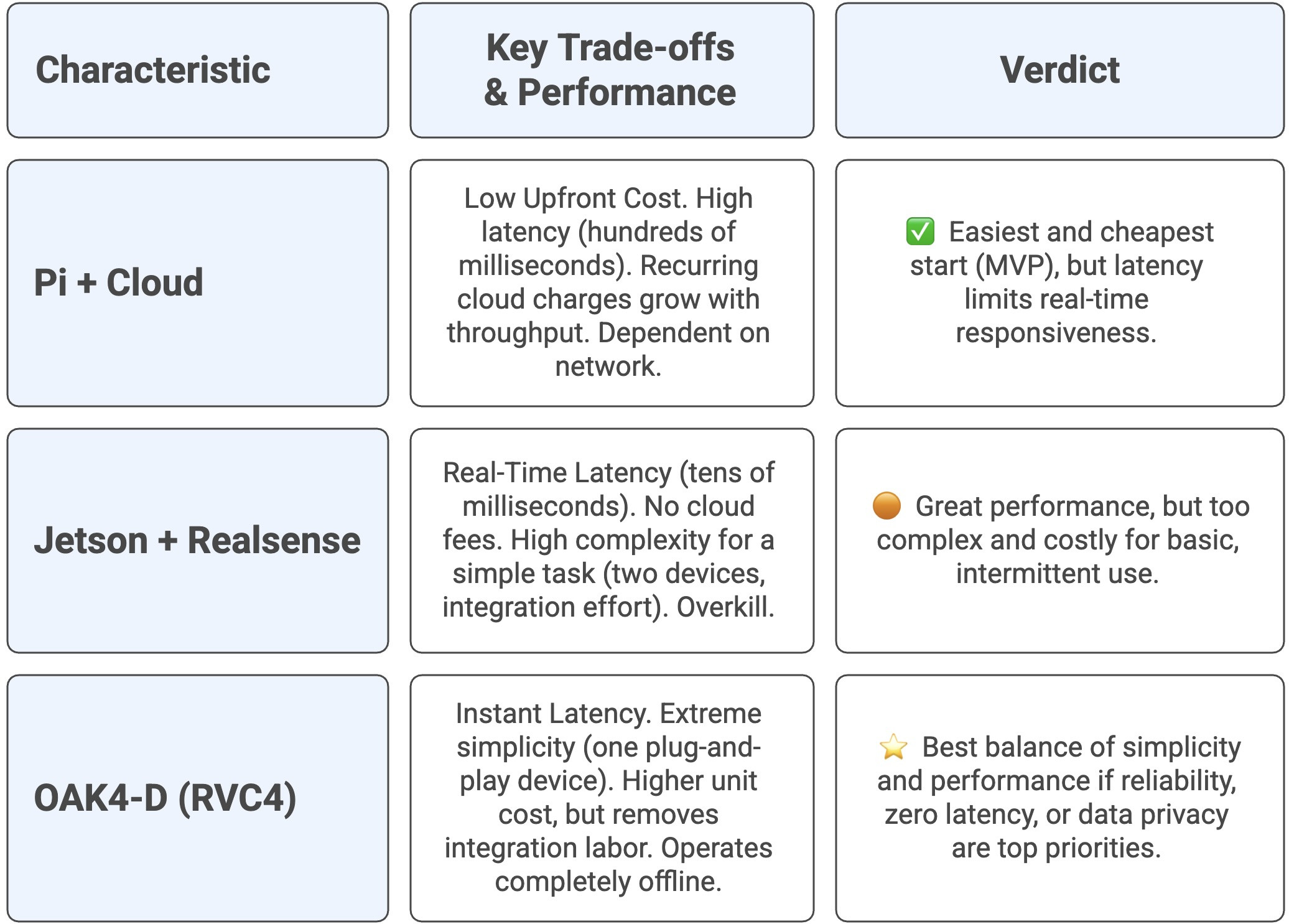

1. Automated Conveyor Snapshot System (Simple)

This task involves taking a snapshot on a trigger and running a simple quality-check or barcode-scan model. In our scenario, approximately 1,000 products per day pass along the conveyor, which means around 1,000 detections and, for the cloud option, 1,000 requests to the cloud API per day. The workload is intermittent and simple on a per-item basis, but the request volume quickly adds up in both latency and cost.

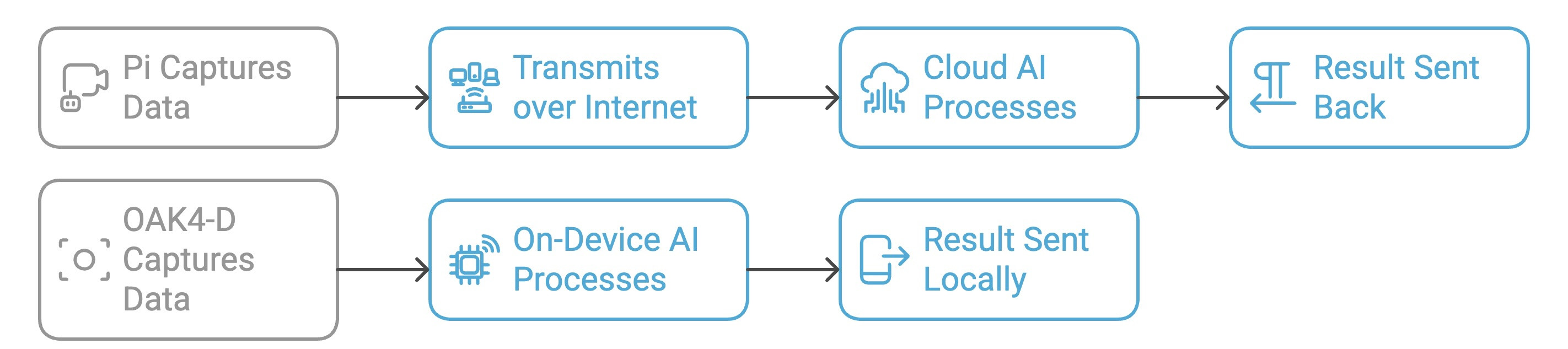

Data processing workflow comparison

The all-in-one design of OAK4-D bypasses the complexity and network dependency of the other two approaches.

For YOLOv8-class models, cloud-based inference can introduce tens to hundreds of milliseconds of network round-trip delay. Edge processing with Jetson + RealSense or OAK4-D keeps inference on-device and removes that network hop, so results are available in real time. These ranges are based on published Raspberry Pi 5 and Jetson YOLOv8 benchmarks.
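To make the latency argument easier to reason about, here is a minimal sketch of a per-frame latency budget. The `LatencyBudget` class and every number in it are illustrative placeholders, not measurements from the benchmarks mentioned above; substitute values measured on your own hardware and network.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyBudget:
    """Per-frame latency components, all in milliseconds (hypothetical model)."""

    capture_ms: float
    network_round_trip_ms: float  # 0 when inference runs on-device
    inference_ms: float

    def total_ms(self) -> float:
        # End-to-end latency is the sum of the sequential stages.
        return self.capture_ms + self.network_round_trip_ms + self.inference_ms

    def max_fps(self) -> float:
        # Upper bound on throughput if frames are processed one at a time.
        return 1000.0 / self.total_ms()


# Placeholder numbers for illustration only.
cloud = LatencyBudget(capture_ms=10.0, network_round_trip_ms=150.0, inference_ms=20.0)
edge = LatencyBudget(capture_ms=10.0, network_round_trip_ms=0.0, inference_ms=30.0)

for name, budget in (("cloud", cloud), ("edge", edge)):
    print(f"{name}: {budget.total_ms():.0f} ms per frame, "
          f"at most {budget.max_fps():.1f} FPS")
```

With these placeholder values, the network round trip dominates the cloud budget even though the assumed cloud inference time is shorter than the assumed edge inference time.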

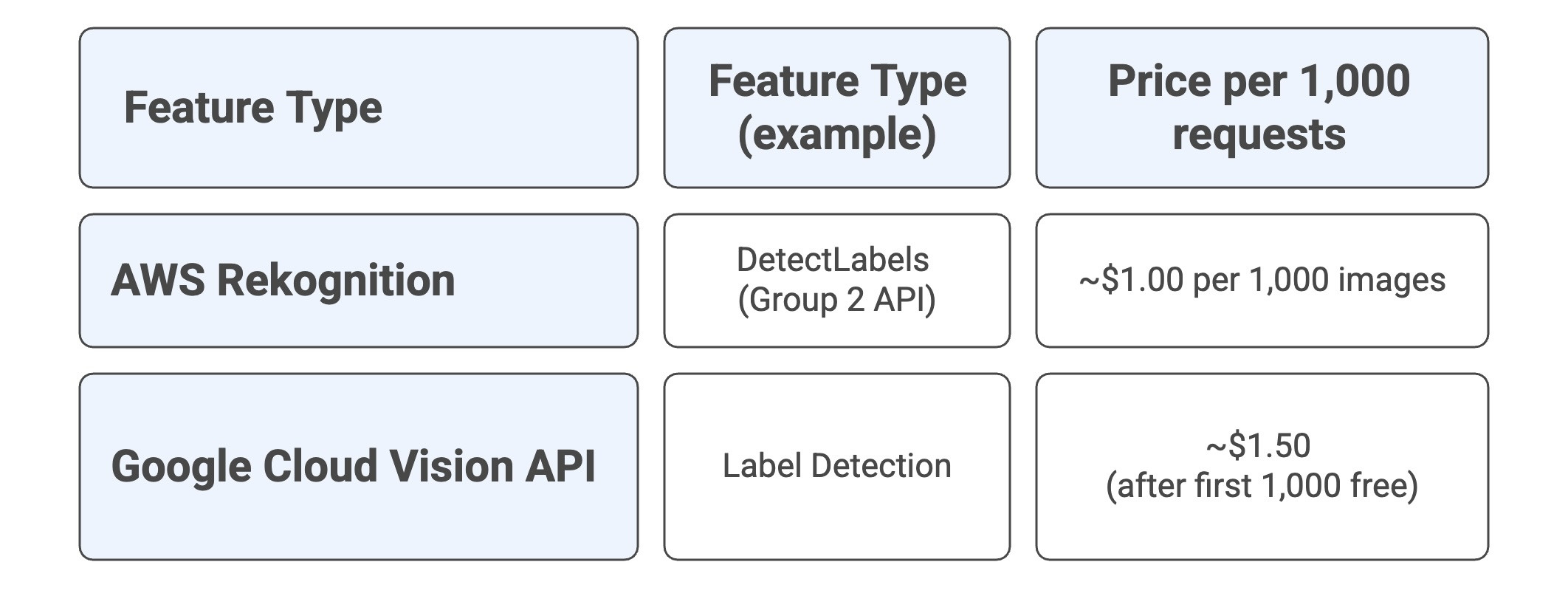

Cloud Inference Cost Comparison: AWS vs Google Cloud Vision

To make the cloud trade-offs more concrete, let’s look at what our 1,000 products per day conveyor scenario means in terms of cloud inference costs. That’s roughly 30,000 detections per month.

We’ll compare two of the most commonly used vision inference APIs for a simple label / barcode-type task: AWS Rekognition and Google Cloud Vision.

*Prices are base-tier, in USD, and can vary by region and feature. Always double-check the current pricing pages.

For our 30,000 detections/month example (ignoring storage, network egress, and other charges; the only free allowance applied is Google's first 1,000 units):

AWS Rekognition

- 30,000 images × $0.001 ≈ $30/month in inference fees.

Google Cloud Vision

- First 1,000 label units: free.

- Remaining 29,000 units × $1.50 / 1,000 ≈ $43.50/month.

So even for a “simple” quality-check / barcode-scan conveyor, cloud inference becomes a recurring operational expense (OpEx) line item of roughly $360 to $520 per year at only 1,000 products per day. As throughput grows (more conveyors, more lines, more sites), these per-1,000-request fees scale linearly with your volume, unlike an edge-first approach (e.g., OAK4-D) where your inference cost is effectively capped by the hardware you deploy.
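The arithmetic above can be reproduced, and extended to more conveyors, with a short sketch. It uses only the base-tier prices quoted in this section and assumes a 30-day month; the function names are illustrative, and volume discounts, storage, and egress are deliberately ignored.

```python
AWS_PRICE_PER_IMAGE = 0.001   # USD per image, base tier as quoted above
GCV_PRICE_PER_1000 = 1.50     # USD per 1,000 units, as quoted above
GCV_FREE_UNITS = 1_000        # first 1,000 units per month are free


def monthly_images(conveyors: int, products_per_day: int = 1_000, days: int = 30) -> int:
    """Images sent to the cloud per month (one request per product)."""
    return conveyors * products_per_day * days


def aws_monthly_cost(images: int) -> float:
    """Base-tier AWS Rekognition cost in USD for one month."""
    return images * AWS_PRICE_PER_IMAGE


def gcv_monthly_cost(images: int) -> float:
    """Base-tier Google Cloud Vision cost in USD for one month."""
    billable = max(0, images - GCV_FREE_UNITS)
    return billable * GCV_PRICE_PER_1000 / 1000


for conveyors in (1, 5, 20):
    n = monthly_images(conveyors)
    print(f"{conveyors:>2} conveyor(s): {n:>7,} images/month, "
          f"AWS ${aws_monthly_cost(n) * 12:,.2f}/yr, "
          f"Google ${gcv_monthly_cost(n) * 12:,.2f}/yr")
```

For a single conveyor this gives the $30 and $43.50 monthly figures from the text; the loop shows how the annual bill grows in step with the number of conveyors.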

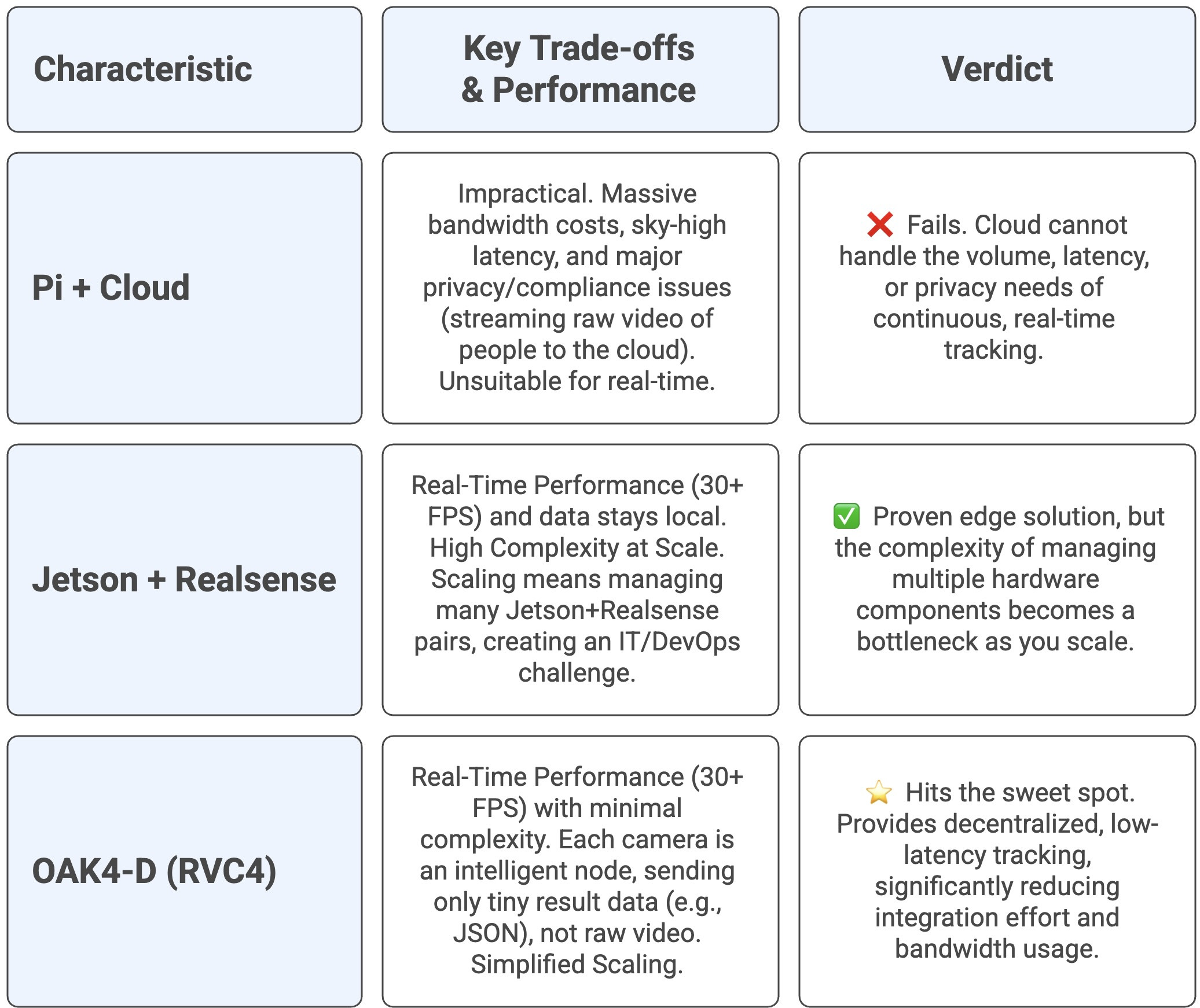

2. In-Store / Factory People & Object Tracking (Moderate Complexity)

This task involves streaming video continuously, running advanced detection and tracking models, and raising real-time alerts.

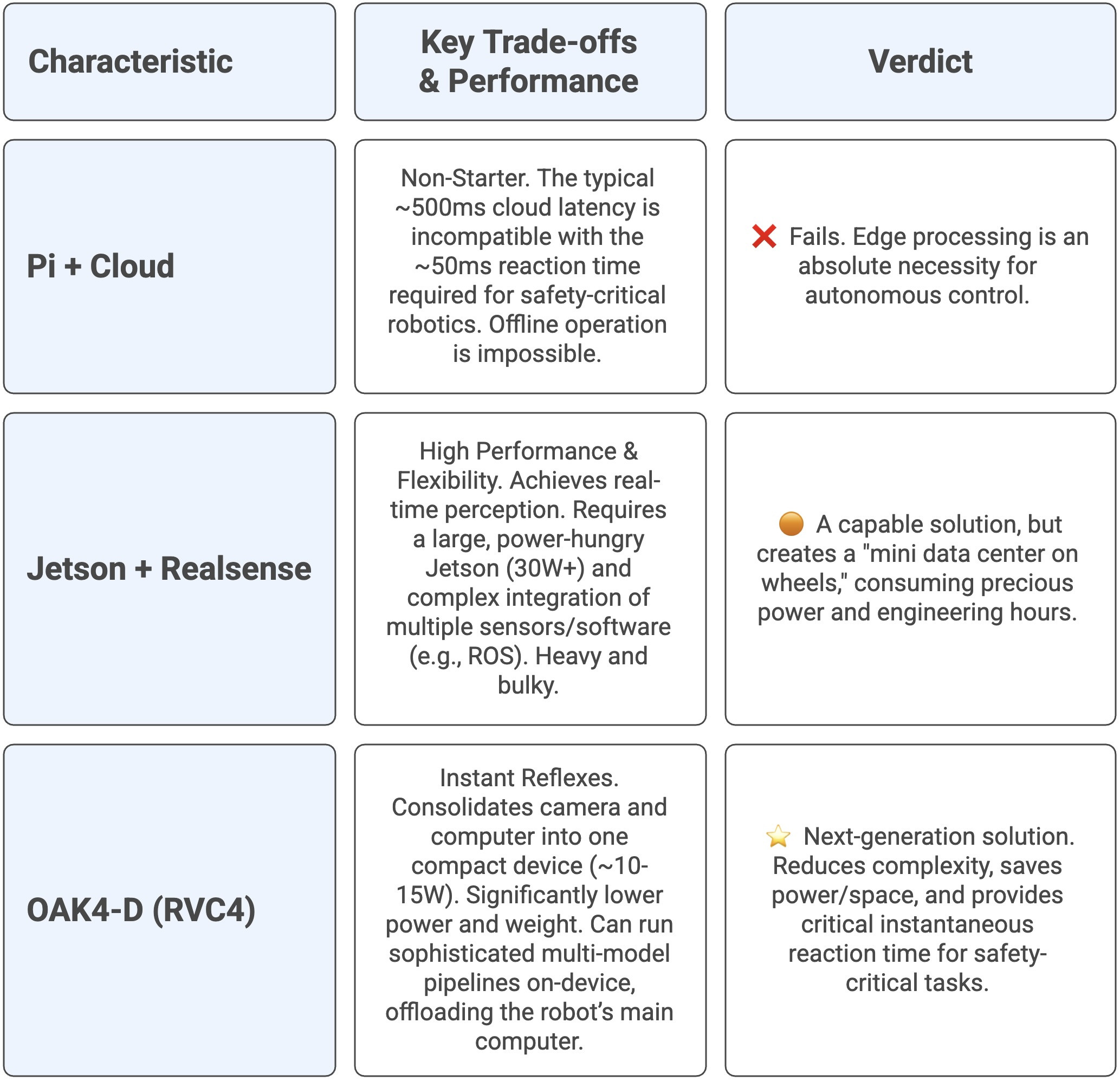

3. Autonomous Warehouse Robot Perception (High Complexity)

A robot needs to perceive its environment in real time (depth, object detection, SLAM) to navigate safely, and it must operate offline with instant reaction times.

Conclusion: Weighing the Trade-offs

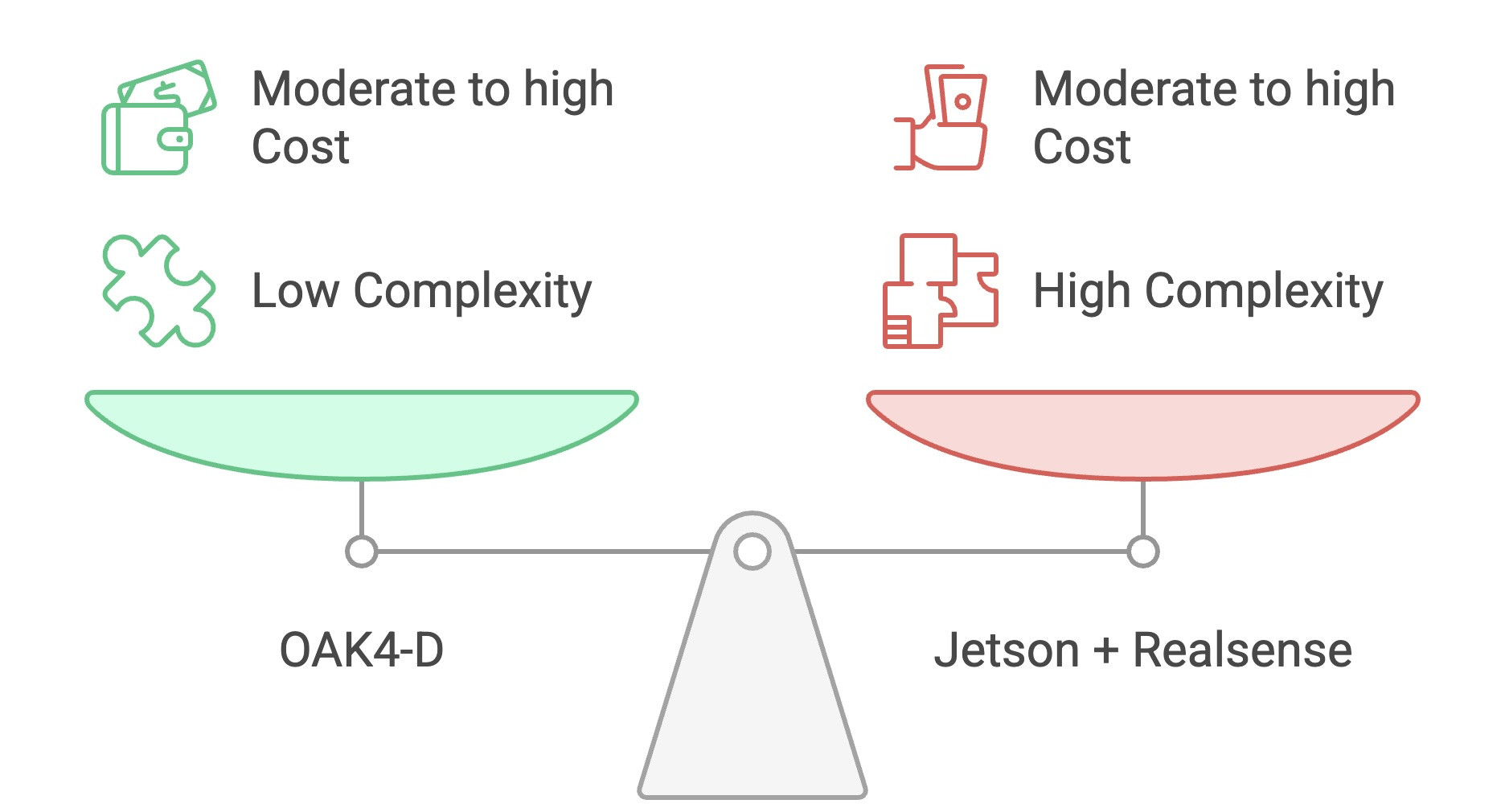

If you want OAK4-D-level performance, reliability, and latency, Jetson + RealSense won’t actually be cheaper, just more work.

The choice depends entirely on your needs:

Consider Pi + Cloud only if you absolutely need the lowest upfront hardware cost for a throwaway prototype or a non-critical, low-volume task where latency, reliability, and long-term operating cost do not matter.

Consider Jetson + RealSense only if you have a strong engineering team and either a very specific need for maximum flexibility (e.g., fusing many heterogeneous sensors or running non-standard GPU workloads) or a need for extreme on-device NN inference for advanced robotics/reasoning that requires a top-of-the-line (and expensive) Jetson module. In both cases, be prepared to handle the complexity, maintenance, and deployment overhead of a multi-device system.

In almost all cases, you should start with OAK4-D (RVC4). It gives you simplicity, reliability, ultra-low latency, and cost-effectiveness at scale. Because the computer is integrated into the camera, it drastically reduces integration effort and long-term engineering costs, more than offsetting the higher unit price.

For industrial and robotic applications that demand real-time performance and reliability, pushing intelligence to the edge is mandatory. The Luxonis OAK4-D is at the cutting edge of this space, making high-performance AI vision systems both powerful and "ridiculously simple."

The OAK4-D’s all-in-one design keeps system complexity as low as the simplest cloud option, offering a massive advantage over the highly complex multi-component Jetson setup.