Luxonis-Adam

Luxonis-Adam

Hi Adam!

I’ve set up my rig (Raspberry Pi 5 + OAK-FFC-4P + 2x AR0234) with a rigid 13cm baseline. I followed your advice a but but needed to make a few changes. We are using CAM_B (Right) and CAM_C (Left), but since I’m using a custom mount, I had to be very specific with my calibration configuration.

Here is exactly what I did, and where I am stuck now (specifically regarding significant map artifacts at range).

- My Calibration Setup

I ran the standard calibrate.py script with this board definition JSON, effectively defining CAM_C (Left) as being 13cm offset from CAM_B (Right).

python3 calibrate.py -s 2.4 -nx 11 -ny 8 -brd custom_oak_ffc_4p.json

custom_oak_ffc_4p.json:

{

"board_config": {

"name": "OAK-FFC-4P",

"cameras": {

"CAM_C": {

"name": "left",

"type": "color",

"hfov": 120,

"extrinsics": {

"to_cam": "CAM_B",

"specTranslation": { "x": 13, "y": 0, "z": 0 },

"rotation": { "r": 0, "p": 0, "y": 0 }

}

},

"CAM_B": {

"name": "right",

"type": "color",

"hfov": 120

}

},

"stereo_config": {

"left_cam": "CAM_C",

"right_cam": "CAM_B"

}

}

}

I then flashed the resulting calibration to the OAK EEPROM. So that's the 39 images you have to take from the board to get the JSON calibration file.

- My Custom ROS2 Bridge

We wrote a custom C++ node instead of using the standard driver. We couldn't or didn't know how we could use the already available ROS OAK Bridge with the 4P FFC.

It loads the calibration blob directly.

It manually computes cv::stereoRectify and sets up ImageManip nodes on the OAK to undistort/rectify the images before they even leave the device.

It publishes exact-sync image_rect messages to ROS2.

#include <chrono>

#include <memory>

#include <string>

#include <vector>

#include <iostream>

#include <thread>

#include <atomic>

#include <mutex>

#include "rclcpp/rclcpp.hpp"

#include "sensor_msgs/msg/image.hpp"

#include "sensor_msgs/msg/camera_info.hpp"

#include "sensor_msgs/msg/imu.hpp"

#include "sensor_msgs/msg/magnetic_field.hpp"

#include <cv_bridge/cv_bridge.hpp>

#include "depthai/depthai.hpp"

#include <opencv2/opencv.hpp>

using namespace std::chrono_literals;

class OakFfcCppNode : public rclcpp::Node {

public:

OakFfcCppNode() : Node("oak_ffc_cpp_node") {

// Parameters

this->declare_parameter("fps", 20);

this->declare_parameter("width", 640);

this->declare_parameter("height", 400);

this->declare_parameter("calib_path", "");

this->declare_parameter("image_type", "color"); // color or mono

fps_ = this->get_parameter("fps").as_int();

width_ = this->get_parameter("width").as_int();

height_ = this->get_parameter("height").as_int();

calib_path_ = this->get_parameter("calib_path").as_string();

image_type_ = this->get_parameter("image_type").as_string();

// Publishers

pub_left_ = this->create_publisher<sensor_msgs::msg::Image>("left/image_rect", 10);

pub_right_ = this->create_publisher<sensor_msgs::msg::Image>("right/image_rect", 10);

pub_left_info_ = this->create_publisher<sensor_msgs::msg::CameraInfo>("left/camera_info", 10);

pub_right_info_ = this->create_publisher<sensor_msgs::msg::CameraInfo>("right/camera_info", 10);

pub_imu_ = this->create_publisher<sensor_msgs::msg::Imu>("imu/data", 10);

pub_mag_ = this->create_publisher<sensor_msgs::msg::MagneticField>("imu/mag", 10);

}

void run() {

pipeline_ = std::make_unique<dai::Pipeline>();

dai::CalibrationHandler calibData;

bool calibLoaded = false;

if (!calib_path_.empty()) {

try {

calibData = dai::CalibrationHandler(calib_path_);

pipeline_->setCalibrationData(calibData);

calibLoaded = true;

RCLCPP_INFO(this->get_logger(), "Loaded calibration from file.");

} catch (const std::exception& e) {

RCLCPP_ERROR(this->get_logger(), "Failed to load calibration file: %s", e.what());

}

}

if (!calibLoaded) {

RCLCPP_FATAL(this->get_logger(), "Calibration not loaded! Node will exit as SLAM requires rectification.");

throw std::runtime_error("Calibration missing");

}

// --- Pipeline Construction ---

auto camLeft = pipeline_->create<dai::node::ColorCamera>();

auto camRight = pipeline_->create<dai::node::ColorCamera>();

camLeft->setBoardSocket(dai::CameraBoardSocket::CAM_C);

camRight->setBoardSocket(dai::CameraBoardSocket::CAM_B);

camLeft->setFps(fps_);

camRight->setFps(fps_);

camLeft->setResolution(dai::ColorCameraProperties::SensorResolution::THE_1200_P);

camRight->setResolution(dai::ColorCameraProperties::SensorResolution::THE_1200_P);

camLeft->setIspScale(1, 3);

camRight->setIspScale(1, 3);

// Limit exposure to 33ms to prevent motion blur during movement

camLeft->initialControl.setAutoExposureLimit(33000);

camRight->initialControl.setAutoExposureLimit(33000);

auto manipLeft = pipeline_->create<dai::node::ImageManip>();

auto manipRight = pipeline_->create<dai::node::ImageManip>();

if (image_type_ == "mono") {

manipLeft->initialConfig.setFrameType(dai::ImgFrame::Type::GRAY8);

manipRight->initialConfig.setFrameType(dai::ImgFrame::Type::GRAY8);

manipLeft->setMaxOutputFrameSize(width_ * height_);

manipRight->setMaxOutputFrameSize(width_ * height_);

} else {

manipLeft->initialConfig.setFrameType(dai::ImgFrame::Type::BGR888i);

manipRight->initialConfig.setFrameType(dai::ImgFrame::Type::BGR888i);

manipLeft->setMaxOutputFrameSize(width_ * height_ * 3);

manipRight->setMaxOutputFrameSize(width_ * height_ * 3);

}

// Generate Mesh

if (calibLoaded) {

std::vector<std::vector<float>> M1_vec = calibData.getCameraIntrinsics(dai::CameraBoardSocket::CAM_C, width_, height_);

std::vector<float> d1_vec = calibData.getDistortionCoefficients(dai::CameraBoardSocket::CAM_C);

std::vector<std::vector<float>> M2_vec = calibData.getCameraIntrinsics(dai::CameraBoardSocket::CAM_B, width_, height_);

std::vector<float> d2_vec = calibData.getDistortionCoefficients(dai::CameraBoardSocket::CAM_B);

std::vector<std::vector<float>> extrinsics = calibData.getCameraExtrinsics(dai::CameraBoardSocket::CAM_C, dai::CameraBoardSocket::CAM_B);

// Convert to OpenCV

cv::Mat M1(3, 3, CV_64F);

for(int i=0; i<3; i++) for(int j=0; j<3; j++) M1.at<double>(i,j) = static_cast<double>(M1_vec[i][j]);

cv::Mat d1(1, d1_vec.size(), CV_64F);

for(size_t i=0; i<d1_vec.size(); i++) d1.at<double>(0,i) = static_cast<double>(d1_vec[i]);

cv::Mat M2(3, 3, CV_64F);

for(int i=0; i<3; i++) for(int j=0; j<3; j++) M2.at<double>(i,j) = static_cast<double>(M2_vec[i][j]);

cv::Mat d2(1, d2_vec.size(), CV_64F);

for(size_t i=0; i<d2_vec.size(); i++) d2.at<double>(0,i) = static_cast<double>(d2_vec[i]);

cv::Mat R(3, 3, CV_64F);

cv::Mat T(3, 1, CV_64F);

for(int i=0; i<3; i++) {

for(int j=0; j<3; j++) R.at<double>(i,j) = static_cast<double>(extrinsics[i][j]);

T.at<double>(i,0) = static_cast<double>(extrinsics[i][3]);

}

// Ensure T[0] is negative (Left -> Right means X_right = X_left - B)

if (T.at<double>(0,0) > 0) {

RCLCPP_WARN(this->get_logger(), "Baseline T[0] is positive (%f). Inverting extrinsics to ensure Left->Right convention.", T.at<double>(0,0));

cv::Mat R_inv = R.t();

cv::Mat T_inv = -R_inv * T;

R = R_inv;

T = T_inv;

RCLCPP_INFO(this->get_logger(), "New Baseline T: %f %f %f", T.at<double>(0,0), T.at<double>(1,0), T.at<double>(2,0));

}

cv::Mat R1, R2, P1, P2, Q;

cv::stereoRectify(M1, d1, M2, d2, cv::Size(width_, height_), R, T, R1, R2, P1, P2, Q, cv::CALIB_ZERO_DISPARITY, 0);

RCLCPP_INFO(this->get_logger(), "Rectification computed.");

RCLCPP_INFO(this->get_logger(), "P1: %f %f %f %f", P1.at<double>(0,0), P1.at<double>(0,1), P1.at<double>(0,2), P1.at<double>(0,3));

RCLCPP_INFO(this->get_logger(), "P2: %f %f %f %f", P2.at<double>(0,0), P2.at<double>(0,1), P2.at<double>(0,2), P2.at<double>(0,3));

P1.convertTo(P1_, CV_32F);

P2.convertTo(P2_, CV_32F);

cv::Mat map1_x, map1_y, map2_x, map2_y;

cv::initUndistortRectifyMap(M1, d1, R1, P1, cv::Size(width_, height_), CV_32FC1, map1_x, map1_y);

cv::initUndistortRectifyMap(M2, d2, R2, P2, cv::Size(width_, height_), CV_32FC1, map2_x, map2_y);

auto [meshLeft, mwLeft, mhLeft] = getMesh(map1_x, map1_y);

auto [meshRight, mwRight, mhRight] = getMesh(map2_x, map2_y);

RCLCPP_INFO(this->get_logger(), "Mesh Left: %d points, %dx%d", (int)meshLeft.size(), mwLeft, mhLeft);

RCLCPP_INFO(this->get_logger(), "Mesh Right: %d points, %dx%d", (int)meshRight.size(), mwRight, mhRight);

manipLeft->setWarpMesh(meshLeft, mwLeft, mhLeft);

manipRight->setWarpMesh(meshRight, mwRight, mhRight);

}

camLeft->isp.link(manipLeft->inputImage);

camRight->isp.link(manipRight->inputImage);

// Sync

auto sync = pipeline_->create<dai::node::Sync>();

sync->setSyncThreshold(std::chrono::milliseconds(10));

manipLeft->out.link(sync->inputs["left"]);

manipRight->out.link(sync->inputs["right"]);

auto xoutSync = pipeline_->create<dai::node::XLinkOut>();

xoutSync->setStreamName("sync");

sync->out.link(xoutSync->input);

auto imu = pipeline_->create<dai::node::IMU>();

imu->enableIMUSensor({dai::IMUSensor::ACCELEROMETER_RAW, dai::IMUSensor::GYROSCOPE_RAW, dai::IMUSensor::MAGNETOMETER_CALIBRATED}, 200);

imu->setBatchReportThreshold(1);

imu->setMaxBatchReports(10);

auto xoutImu = pipeline_->create<dai::node::XLinkOut>();

xoutImu->setStreamName("imu");

imu->out.link(xoutImu->input);

// Start Device

device_ = std::make_unique<dai::Device>(*pipeline_);

// Use Callbacks for lower latency

q_sync_ = device_->getOutputQueue("sync", 4, false);

q_imu_ = device_->getOutputQueue("imu", 50, false);

q_sync_->addCallback(std::bind(&OakFfcCppNode::syncCallback, this, std::placeholders::_1));

q_imu_->addCallback(std::bind(&OakFfcCppNode::imuCallback, this, std::placeholders::_1));

RCLCPP_INFO(this->get_logger(), "OAK-FFC C++ Bridge Started with Callbacks");

// Main Loop just to keep node alive

rclcpp::spin(this->get_node_base_interface());

}

private:

int fps_, width_, height_;

std::string calib_path_, image_type_;

rclcpp::Publisher<sensor_msgs::msg::Image>::SharedPtr pub_left_, pub_right_;

rclcpp::Publisher<sensor_msgs::msg::CameraInfo>::SharedPtr pub_left_info_, pub_right_info_;

rclcpp::Publisher<sensor_msgs::msg::Imu>::SharedPtr pub_imu_;

rclcpp::Publisher<sensor_msgs::msg::MagneticField>::SharedPtr pub_mag_;

std::unique_ptr<dai::Device> device_;

std::unique_ptr<dai::Pipeline> pipeline_;

std::shared_ptr<dai::DataOutputQueue> q_sync_, q_imu_;

sensor_msgs::msg::CameraInfo left_info_, right_info_;

// Calibration matrices

cv::Mat P1_, P2_, R1_, R2_;

struct TimeSync {

rclcpp::Time start_ros;

std::chrono::time_point<std::chrono::steady_clock, std::chrono::steady_clock::duration> start_device;

bool initialized = false;

std::mutex mutex;

};

TimeSync global_sync_;

void imuCallback(std::shared_ptr<dai::ADatatype> data) {

if (auto imuData = std::dynamic_pointer_cast<dai::IMUData>(data)) {

for (const auto& packet : imuData->packets) {

// IMU Message (Accel + Gyro)

sensor_msgs::msg::Imu msg;

auto ts = packet.acceleroMeter.timestamp.get();

// Get ROS time

rclcpp::Time ros_time = this->getRosTime(ts, global_sync_);

msg.header.stamp = ros_time;

msg.header.frame_id = "oak_imu_frame";

// Rotation (-90 deg Z fix)

msg.linear_acceleration.x = packet.acceleroMeter.y;

msg.linear_acceleration.y = -packet.acceleroMeter.x;

msg.linear_acceleration.z = packet.acceleroMeter.z;

msg.angular_velocity.x = packet.gyroscope.y;

msg.angular_velocity.y = -packet.gyroscope.x;

msg.angular_velocity.z = packet.gyroscope.z;

pub_imu_->publish(msg);

// Mag Message

sensor_msgs::msg::MagneticField mag_msg;

mag_msg.header.stamp = ros_time;

mag_msg.header.frame_id = "oak_imu_frame";

// Convert uT to Tesla (1 uT = 1e-6 T)

const float uT_to_T = 1e-6;

mag_msg.magnetic_field.x = packet.magneticField.x * uT_to_T;

mag_msg.magnetic_field.y = packet.magneticField.y * uT_to_T;

mag_msg.magnetic_field.z = packet.magneticField.z * uT_to_T;

pub_mag_->publish(mag_msg);

}

}

}

void syncCallback(std::shared_ptr<dai::ADatatype> data) {

if (auto syncData = std::dynamic_pointer_cast<dai::MessageGroup>(data)) {

auto inLeft = syncData->get<dai::ImgFrame>("left");

auto inRight = syncData->get<dai::ImgFrame>("right");

if (inLeft && inRight) {

auto ts = inLeft->getTimestamp();

auto timestamp = this->getRosTime(ts, global_sync_);

std::string encoding = (image_type_ == "color") ? "bgr8" : "mono8";

// Process Left

cv::Mat frameLeft;

if (inLeft->getType() == dai::ImgFrame::Type::GRAY8) {

frameLeft = cv::Mat(inLeft->getHeight(), inLeft->getWidth(), CV_8UC1, inLeft->getData().data());

} else {

frameLeft = cv::Mat(inLeft->getHeight(), inLeft->getWidth(), CV_8UC3, inLeft->getData().data());

}

auto msgLeft = cv_bridge::CvImage(std_msgs::msg::Header(), encoding, frameLeft).toImageMsg();

msgLeft->header.stamp = timestamp;

msgLeft->header.frame_id = "oak_left_frame";

pub_left_->publish(*msgLeft);

auto infoLeft = getCameraInfo(dai::CameraBoardSocket::CAM_C, timestamp);

pub_left_info_->publish(infoLeft);

// Process Right

cv::Mat frameRight;

if (inRight->getType() == dai::ImgFrame::Type::GRAY8) {

frameRight = cv::Mat(inRight->getHeight(), inRight->getWidth(), CV_8UC1, inRight->getData().data());

} else {

frameRight = cv::Mat(inRight->getHeight(), inRight->getWidth(), CV_8UC3, inRight->getData().data());

}

auto msgRight = cv_bridge::CvImage(std_msgs::msg::Header(), encoding, frameRight).toImageMsg();

msgRight->header.stamp = timestamp;

msgRight->header.frame_id = "oak_right_frame";

pub_right_->publish(*msgRight);

auto infoRight = getCameraInfo(dai::CameraBoardSocket::CAM_B, timestamp);

pub_right_info_->publish(infoRight);

}

}

}

// Helper to generate mesh for rectification

std::tuple<std::vector<dai::Point2f>, int, int> getMesh(const cv::Mat& mapX, const cv::Mat& mapY) {

std::vector<dai::Point2f> mesh;

int meshCellSize = 16;

int width = mapX.cols;

int height = mapX.rows;

for (int y = 0; y <= height; y++) {

if (y % meshCellSize == 0) {

for (int x = 0; x < width; x++) {

if (x % meshCellSize == 0) {

float mx, my;

if (y == height) {

mx = mapX.at<float>(y - 1, x);

my = mapY.at<float>(y - 1, x);

} else {

mx = mapX.at<float>(y, x);

my = mapY.at<float>(y, x);

}

mesh.push_back({mx, my});

}

}

if ((width % meshCellSize) % 2 != 0) mesh.push_back({0.0f, 0.0f});

}

}

int meshWidth = (width / meshCellSize);

if ((width % meshCellSize) % 2 != 0) meshWidth++;

int meshHeight = (height / meshCellSize) + 1;

return {mesh, meshWidth, meshHeight};

}

rclcpp::Time getRosTime(std::chrono::time_point<std::chrono::steady_clock, std::chrono::steady_clock::duration> t, TimeSync& sync) {

std::lock_guard<std::mutex> lock(sync.mutex);

if (!sync.initialized) {

sync.start_ros = this->now();

sync.start_device = t;

sync.initialized = true;

RCLCPP_INFO(this->get_logger(), "TimeSync initialized. ROS: %f", sync.start_ros.seconds());

}

auto diff = t - sync.start_device;

int64_t ns = std::chrono::duration_cast<std::chrono::nanoseconds>(diff).count();

return sync.start_ros + rclcpp::Duration::from_nanoseconds(ns);

}

sensor_msgs::msg::CameraInfo getCameraInfo(dai::CameraBoardSocket socket, rclcpp::Time stamp) {

sensor_msgs::msg::CameraInfo info;

info.header.stamp = stamp;

info.header.frame_id = (socket == dai::CameraBoardSocket::CAM_C) ? "oak_left_frame" : "oak_right_frame";

info.width = width_;

info.height = height_;

info.distortion_model = "plumb_bob";

info.d = {0,0,0,0,0};

info.r = {1,0,0,0,1,0,0,0,1};

cv::Mat P = (socket == dai::CameraBoardSocket::CAM_C) ? P1_ : P2_;

if (!P.empty()) {

for(int i=0; i<12; i++) info.p[i] = P.at<float>(i/4, i%4);

float tx = info.p[3];

info.p[3] = tx / 100.0;

for(int i=0; i<3; i++) for(int j=0; j<3; j++) info.k[i*3+j] = P.at<float>(i,j);

}

return info;

}

};

int main(int argc, char** argv) {

rclcpp::init(argc, argv);

try {

auto node = std::make_shared<OakFfcCppNode>();

node->run();

} catch (const std::exception& e) {

std::cerr << "Node failed: " << e.what() << std::endl;

}

rclcpp::shutdown();

return 0;

}

- My RTAB-Map Launch

I feed these rectified images into RTAB-Map on ROS2 Jazzy.

import os

from ament_index_python.packages import get_package_share_directory

from launch import LaunchDescription

from launch.actions import DeclareLaunchArgument, IncludeLaunchDescription

from launch.launch_description_sources import PythonLaunchDescriptionSource

from launch.substitutions import LaunchConfiguration

from launch_ros.actions import Node

def generate_launch_description():

# Arguments

use_sim_time = LaunchConfiguration('use_sim_time', default='false')

# Find calibration file

try:

pkg_share_rtabmap = get_package_share_directory('oak_ffc_rtabmap')

calib_path = os.path.join(pkg_share_rtabmap, 'config', 'calibration.json')

except Exception:

calib_path = ""

# OAK-FFC C++ Bridge Node

oak_bridge_node = Node(

package='oak_ffc_cpp',

executable='oak_bridge_cpp',

name='oak_bridge',

output='screen',

parameters=[{

'fps': 30,

'width': 640,

'height': 400,

'image_type': 'mono', # 'color' or 'mono'

'calib_path': calib_path

}]

)

# IMU Filter Madgwick (Main - 9 Axis with Mag)

imu_filter_node = Node(

package='imu_filter_madgwick',

executable='imu_filter_madgwick_node',

name='imu_filter',

output='screen',

parameters=[{

'use_mag': True,

'publish_tf': False,

'world_frame': 'enu',

'fixed_frame': 'base_link',

'gain': 0.1

}],

remappings=[

('/imu/data_raw', '/imu/data'),

('/imu/data', '/imu/data_filtered'),

('/imu/mag', '/imu/mag')

]

)

tf_base_to_imu = Node(

package='tf2_ros',

executable='static_transform_publisher',

name='base_to_imu',

arguments=['--x', '0', '--y', '0', '--z', '0',

'--yaw', '0', '--pitch', '0', '--roll', '0',

'--frame-id', 'base_link', '--child-frame-id', 'oak_imu_frame']

)

# Static TF: base_link -> oak_left_frame

tf_base_to_left = Node(

package='tf2_ros',

executable='static_transform_publisher',

name='base_to_left',

arguments=['--x', '0', '--y', '0.0375', '--z', '0',

'--yaw', '-1.5707', '--pitch', '0', '--roll', '-1.5707',

'--frame-id', 'base_link', '--child-frame-id', 'oak_left_frame']

)

# RTAB-Map Arguments

rtabmap_parameters = [

'--delete_db_on_start',

'--Rtabmap/DetectionRate 8.0',

]

rtabmap_args_str = ' '.join(rtabmap_parameters)

rtabmap_launch = IncludeLaunchDescription(

PythonLaunchDescriptionSource([

os.path.join(get_package_share_directory('rtabmap_launch'), 'launch', 'rtabmap.launch.py')

]),

launch_arguments={

'rtabmap_args': rtabmap_args_str,

'stereo': 'true',

'frame_id': 'base_link',

'subscribe_depth': 'false',

'subscribe_rgb': 'false',

'subscribe_scan': 'false',

'approx_sync': 'true',

'wait_for_transform': '0.2',

# Topic Remappings

'left_image_topic': '/left/image_rect',

'right_image_topic': '/right/image_rect',

'left_camera_info_topic': '/left/camera_info',

'right_camera_info_topic': '/right/camera_info',

'imu_topic': '/imu/data_filtered',

'wait_imu_to_init': 'true',

'qos': '1'

}.items()

)

# Path Predictor (Visualizes velocity vector)

path_predictor_node = Node(

package='oak_ffc_cpp',

executable='predict_path.py',

name='path_predictor',

output='screen'

)

return LaunchDescription([

oak_bridge_node,

imu_filter_node,

tf_base_to_imu,

tf_base_to_left,

rtabmap_launch,

path_predictor_node

])

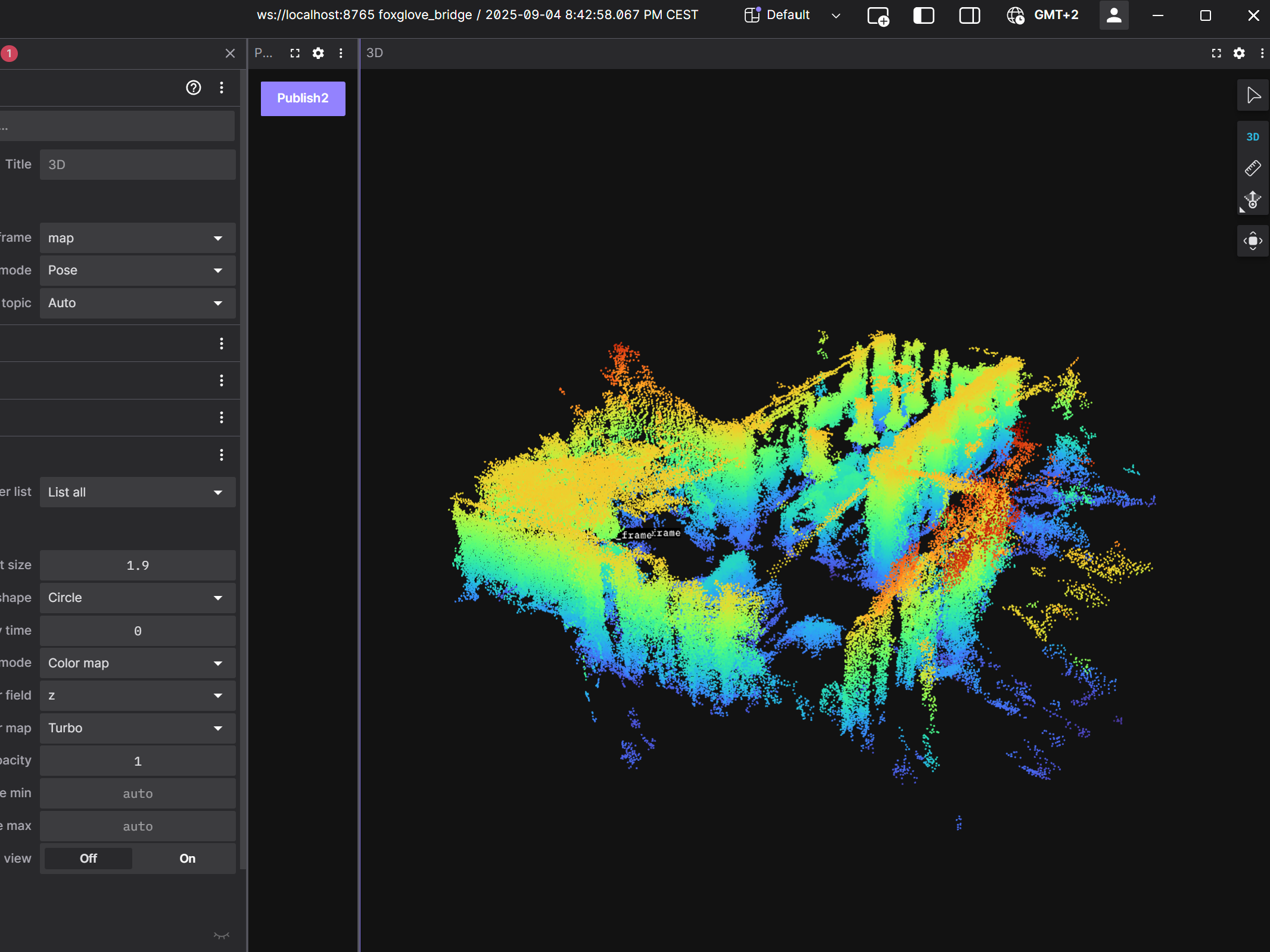

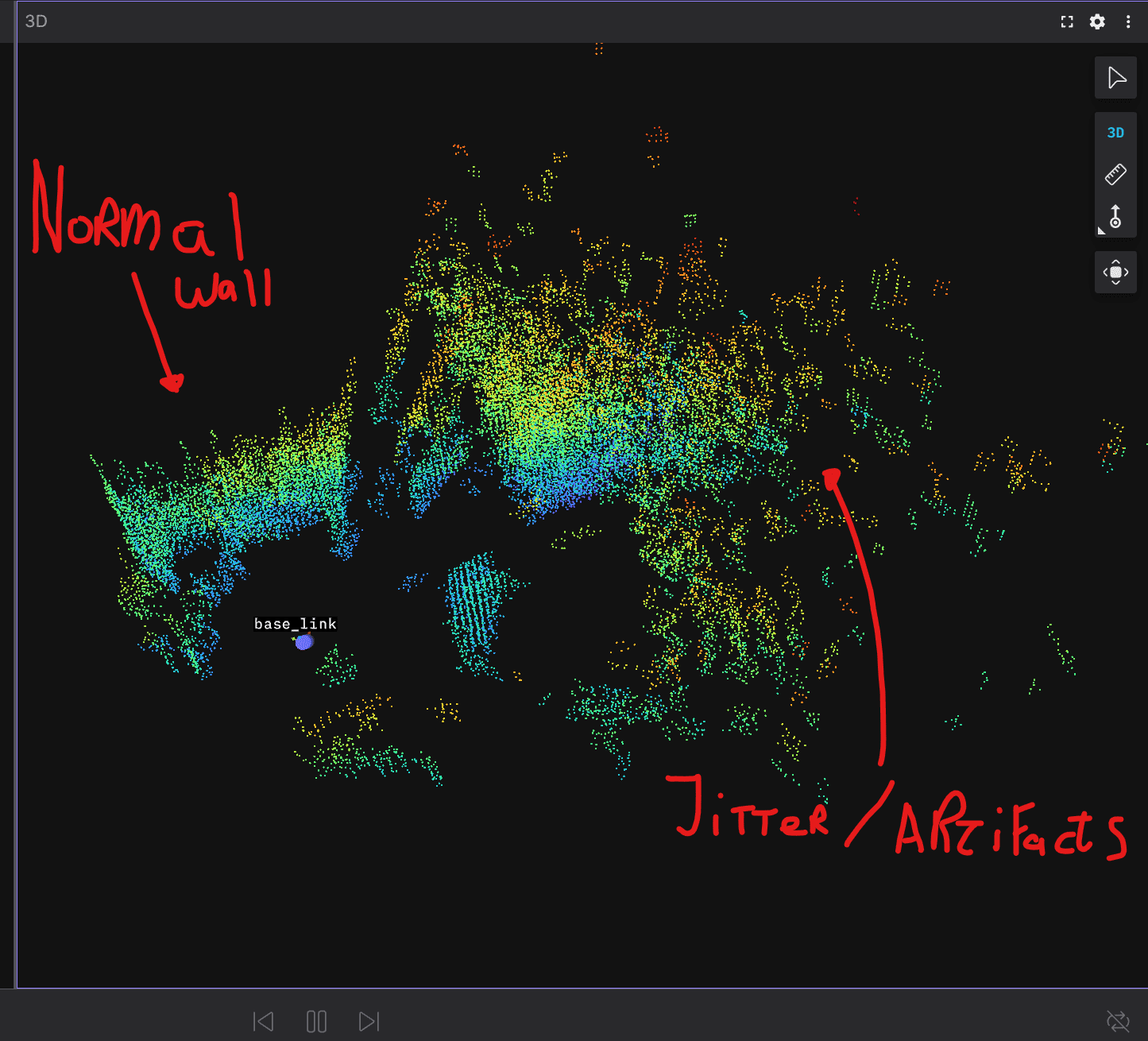

The Problem: Double Walls / Ghosting

So, RTAB-Map works! It builds a map and creates a valid 3D cloud. The Bad News: I am seeing severe "double wall" artefacts for objects further than 3 meters away.

Close objects look fine. Distant walls or pillars appear as duplicate surfaces offset by maybe 20-50cm in the map.

I moved to this setup from an Intel RealSense D456 specifically because I needed a wider baseline (13cm+ vs 9.5cm) for better long-range accuracy. The D456 mapped these walls perfectly with the same rtabmap launch file (just different topics and driver). With my custom OAK-FFC setup, the wider baseline seems to be causing issues rather than solving them.

My Questions:

Is my calibration approach correct? Does calibrate.py / stereoRectify handle these wide-angle M12 lenses the standard M12 lens that comes with the AR0234 (Fisheye model vs Pinhole) correctly at this baseline?

Why the ghosting? Is this a symptom of a slight extrinsic calibration error (rotation) being amplified by the wider baseline?

Synchronisation: I have approx_sync: true in RTAB-Map, but my bridge enforces heavy sync. Should I force approx_sync: false to prevent the visual odometry from drifting due to jitter?

Any advice on solving these long-range artefacts would be massively appreciated. I feel like I'm 95% there, but this last geometric error is killing the map quality. Oh and also im using version 2.30.0 of DepthAI I understood that that version is better compatible with the 4P FFC? Or can I use something like

introlab/rtabmap_rosblob/ros2/rtabmap_examples/launch/depthai.launch.py

and

luxonis/depthai-rosblob/jazzy/depthai_examples/launch/stereo_inertial_node.launch.py ? Or is that not possible with the 4P FFC?

Thank you!

The OAK FFC:

The D456: