Luxonis DepthAI and megaAI | Overview and Status

Today, our team is excited to release the OpenCV AI Kit (OAK): a modular, open-source ecosystem of MIT-licensed hardware, software, and AI training that lets you embed Spatial AI and CV super-powers into your product.

And best of all, you can buy this complete solution today and integrate it into your product tomorrow.

Back our campaign today!

We're now heads-down designing and prototyping the aluminum enclosures we promised our Kickstarter backers after hitting our $1 million stretch goal. Some in-progress photos are below.

Most of the work involves making sure the designs are thermally sound, given that they fully encase the heat source.

We built a test mode (for both OAK-1 and OAK-D) that draws more power in the chip than we have ever managed before. We used this mode for all the testing, so the thermal results below reflect the absolute worst-case power use of the part (or as close as we could get to it).

OAK-1:

We did a quick-and-dirty version to test thermal limits. It looks pretty good.

Our calculations estimated that, at this maximum power use, the maximum surface temperature would be about 60C (the maximum recommended for metallic surfaces). The measurement came out close to that, at 61.3C:

To bring the maximum surface temperature below the 60C recommendation, we increased the heat-sinking and size a bit, from a total thickness of 2.4cm to 2.7cm, and added some vertical aspects as well:

Prototypes of that revision will likely arrive next week. We expect to see sub-60C external temperatures.

Even with the initial, smaller enclosure, we are seeing a maximum die temperature below 75C. The maximum safe die temperature is 105C, so this leaves a comfortable margin.
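As a quick check of the numbers above, here is a minimal sketch of the margin arithmetic. It only uses the temperatures quoted in this update; the function and variable names are made up for illustration, and the die figure is an upper bound since we only know it stayed below 75C.

```python
# Figures quoted in the update above, in degrees Celsius.
SURFACE_LIMIT_C = 60.0      # max recommended for metallic surfaces
MEASURED_SURFACE_C = 61.3   # first OAK-1 prototype, worst-case power mode
DIE_LIMIT_C = 105.0         # max safe die temperature
MEASURED_DIE_MAX_C = 75.0   # upper bound: the die stayed below this


def margin(limit_c: float, measured_c: float) -> float:
    """Return headroom in degrees C; negative means the limit is exceeded."""
    return limit_c - measured_c


surface_margin = margin(SURFACE_LIMIT_C, MEASURED_SURFACE_C)
die_margin = margin(DIE_LIMIT_C, MEASURED_DIE_MAX_C)

print(f"surface margin: {surface_margin:+.1f} C")
print(f"die margin: at least {die_margin:+.1f} C")
```

So the first prototype's surface lands 1.3C over the recommendation (hence the larger heat sink), while the die has at least 30C of headroom.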

Our first example/reference for using the Gen2 Pipeline Builder system is now live!

https://github.com/luxonis/depthai-experiments/pull/8

And we have our first aluminum enclosure samples for BW1093 and BW1098OBC:

Hi DepthAI Backers!

Thanks again for all the continued support and interest in the platform.

We've been hard at work adding a TON of DepthAI functionality. You can track much of the progress in the following GitHub projects:

- Gen1 Feature-Complete: https://github.com/orgs/luxonis/projects/3

- Gen2 December-Delivery: https://github.com/orgs/luxonis/projects/2

- Gen2 2021 Efforts (some are already in progress): https://github.com/orgs/luxonis/projects/4

As you can see, there are a TON of features we have released since the last update. Let's highlight a few below:

RGB-Depth Alignment

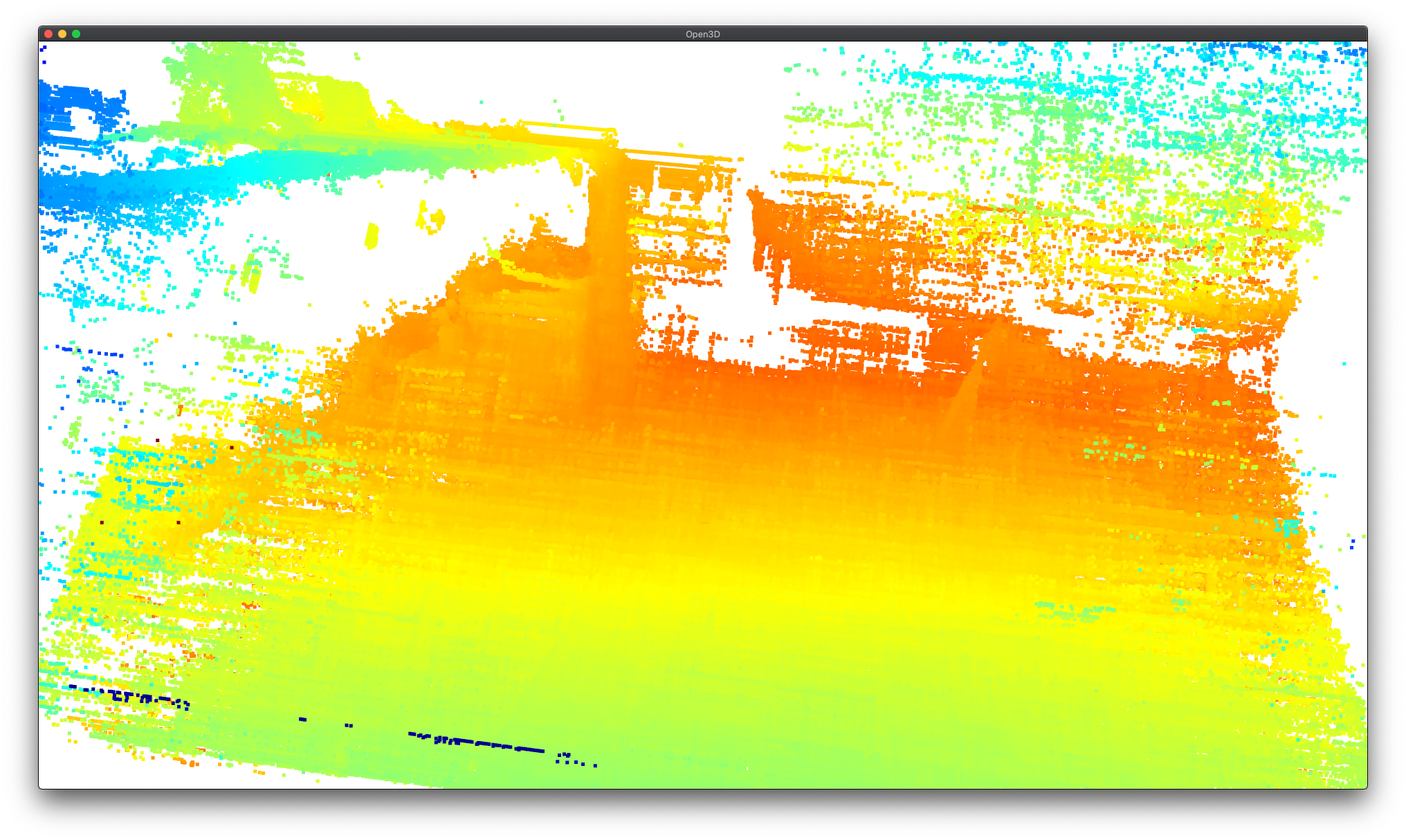

The calibration stage is working now, and future DepthAI builds (after this writing) will have RGB-right calibration performed. An example with semantic segmentation is shown below:

The right grayscale camera is shown on the right and the RGB camera on the left. You can see the cameras have slightly different aspect ratios and fields of view, but the semantic segmentation is still properly applied. For more details, and to track progress, see our GitHub issue on this feature: https://github.com/luxonis/depthai/issues/284

Subpixel Capability

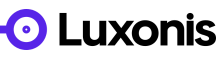

DepthAI now supports subpixel disparity. A quick test of it at my desk is shown here, and to try it out yourself, use the example here.
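For background on why subpixel helps: depth follows from disparity as depth = focal length × baseline / disparity, so with whole-pixel disparities the gap between adjacent depth values grows quickly at long range. The sketch below compares that gap for integer and fractional disparity steps. The focal length, baseline, and number of fractional bits are illustrative assumptions, not values taken from this update or from the DepthAI API.

```python
FOCAL_PX = 441.0       # ASSUMED focal length in pixels (illustrative only)
BASELINE_M = 0.075     # ASSUMED stereo baseline in metres (illustrative only)
FRACTIONAL_BITS = 3    # ASSUMED subpixel precision: steps of 1/8 pixel


def depth_m(disparity_px: float) -> float:
    """Pinhole stereo relation: depth = focal * baseline / disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return FOCAL_PX * BASELINE_M / disparity_px


def depth_step_m(disparity_px: float, fractional_bits: int) -> float:
    """Depth change when disparity drops by one quantization step."""
    step = 1.0 / (1 << fractional_bits)
    return depth_m(disparity_px - step) - depth_m(disparity_px)


for d in (5, 10, 40):
    print(f"disparity {d:>2} px: depth {depth_m(d):.2f} m, "
          f"integer step {depth_step_m(d, 0):.3f} m, "
          f"subpixel step {depth_step_m(d, FRACTIONAL_BITS):.3f} m")
```

The smaller the disparity (the farther the object), the bigger the benefit of the finer step.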

Host Side Depth Capability

DepthAI can now also perform depth estimation on images sent from the host. This is very convenient for testing and validation, since stored images can be used. Along with this, we now support outputting the rectified-left and rectified-right streams, so they can be stored and later used with DepthAI's depth engine in various CV pipelines.

See here for how to do this with your DepthAI model. Some examples from the Middlebury stereo dataset are below:

The bad-looking areas are caused by objects being too close to the camera for the given baseline, so their disparity exceeds the 96-pixel maximum search distance for disparity matching (a StereoDepth engine constraint):

These areas improve with extended = True; however, Extended Disparity and Subpixel cannot both be enabled at the same time.
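To make the close-range limit concrete, here is a rough sketch of how a maximum disparity translates into a minimum measurable depth, plus a guard for the mode restriction described above. Only the 96-pixel limit comes from this update. The focal length and baseline are illustrative assumptions, the doubling of the search range in extended mode is an assumption for the sake of the example, and `check_modes` is a hypothetical helper, not a DepthAI API call.

```python
FOCAL_PX = 441.0        # ASSUMED focal length in pixels (illustrative only)
BASELINE_M = 0.075      # ASSUMED stereo baseline in metres (illustrative only)
MAX_DISPARITY_PX = 96   # disparity search limit quoted in the update


def min_depth_m(max_disparity_px: float,
                focal_px: float = FOCAL_PX,
                baseline_m: float = BASELINE_M) -> float:
    """Closest depth whose disparity still fits in the search range."""
    return focal_px * baseline_m / max_disparity_px


def check_modes(extended: bool, subpixel: bool) -> None:
    """Hypothetical guard: the two modes cannot be enabled together."""
    if extended and subpixel:
        raise ValueError("Extended Disparity and Subpixel are mutually exclusive")


check_modes(extended=True, subpixel=False)  # a valid combination

standard = min_depth_m(MAX_DISPARITY_PX)
# ASSUMPTION: extended mode doubles the disparity search range.
extended = min_depth_m(2 * MAX_DISPARITY_PX)

print(f"standard min depth: {standard:.2f} m")
print(f"extended min depth: {extended:.2f} m")
```

With these assumed optics, anything closer than roughly 0.34 m falls outside the standard search range, which is the kind of region that shows up as bad depth in the examples above.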

RGB Focus, Exposure, and Sensitivity Control

We also added manual focus, exposure, and sensitivity controls, along with examples of how to use them. See here for how to use these controls.

Here is an example of increasing the exposure time:

And here is setting it quite low:

It's actually fairly remarkable how well the neural network still detects me as a person even when the image is this dark.

Pure Embedded DepthAI

In our last update (here), we mentioned that we were making a pure-embedded DepthAI.

We made it. Here's the initial concept:

And here it is working!

And here it is on a wrist to give a reference of its size:

And eProsima even got microROS running on this with DepthAI, exporting results over WiFi back to RViz:

RPi Compute Module 4

We're quite excited about this one. We're fairly close to ordering it. Some initial views in Altium below:

There's a bunch more, but we'll leave you with our recent interview with Chris Gammell on The Amp Hour!

https://theamphour.com/517-depth-and-ai-with-brandon-gilles-and-brian-weinstein/

Cheers,

Brandon & The Luxonis Team

It's cool to see the other safety products being built on this. For example, BlueBox Labs recently launched on Kickstarter their spatial AI dash camera, based on DepthAI, which deters collisions (among many other features):

So this could work inside the vehicle to detect and alert when a (distracted) driver is about to hit a cyclist or a pedestrian.

More safety products are in the works. Very satisfying to see.

Cheers,

Brandon

PoE is now fully supported by DepthAI, including two IP67-rated DepthAI models:

https://docs.luxonis.com/en/latest/pages/tutorials/getting-started-with-poe/

Our lowest-cost model launched late last year and has been shipping for a while now.

Our next generation, OAK-D Series 2, is also releasing now.

These are available to early adopters on our Beta store:

https://shop.luxonis.com/collections/beta-store